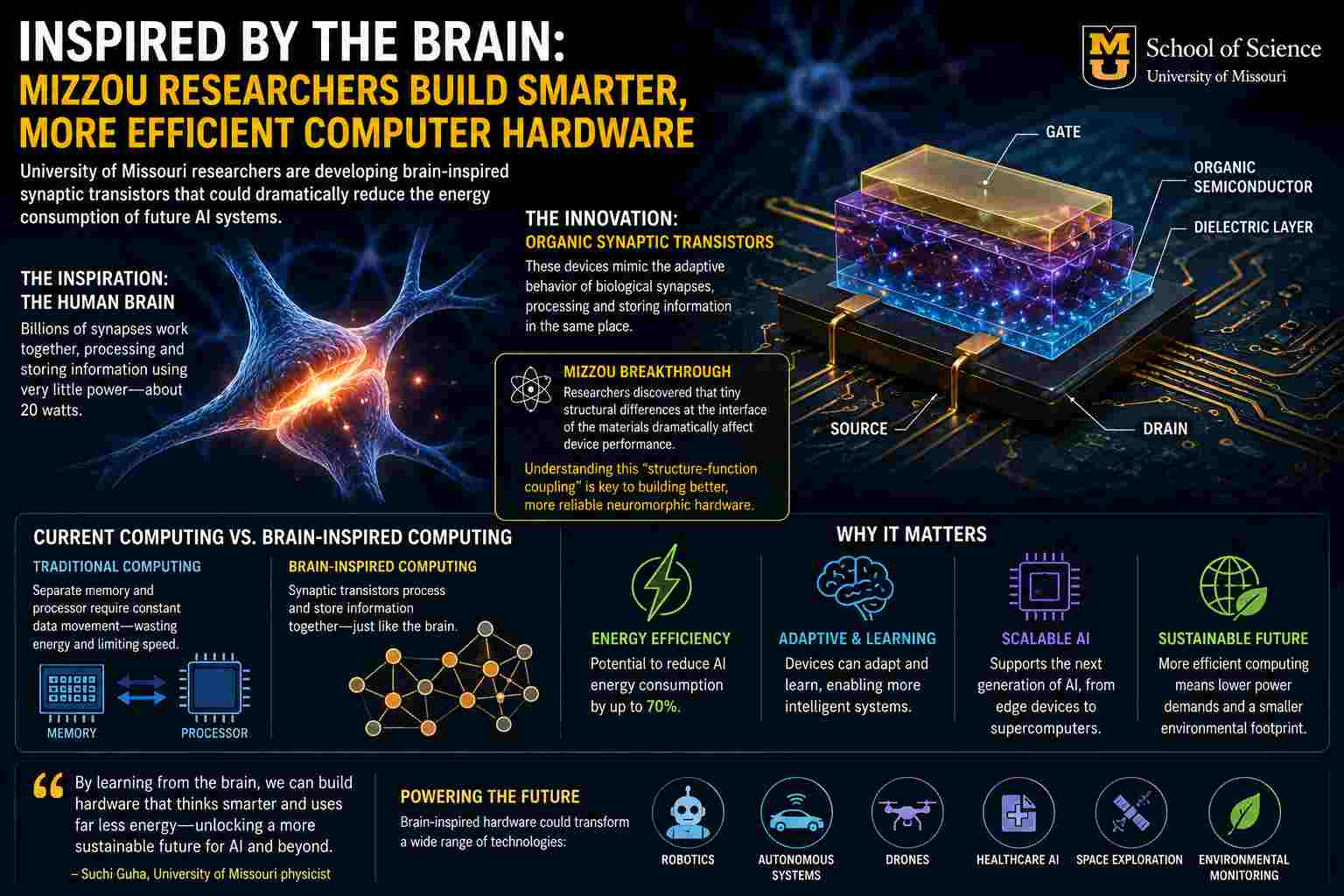

As artificial intelligence systems grow ever more complex and powerful, the computational infrastructure that supports them faces mounting challenges. Modern AI models consume vast amounts of electricity, much of it spent not on calculations themselves, but on moving data between processors and memory. At the University of Missouri, researchers are taking a novel approach inspired by the brain, the most efficient computer known. In recent research featured by Show Me Mizzou, physicist Suchi Guha and her team showed that small adjustments in material structure can significantly improve the performance of brain-like electronic devices called synaptic transistors. Their findings mark a key advance toward neuromorphic computing systems that could offer far greater energy efficiency for tomorrow’s AI workloads.

Beyond the limits of conventional computing

Traditional computing architectures rely on the decades-old von Neumann model, where processing and memory exist in physically separate locations. While effective for conventional workloads, this architecture creates a severe bottleneck for modern AI systems.

Every operation requires data to shuttle repeatedly between processors and memory banks, consuming significant energy and limiting scalability. As AI data centers grow larger, this inefficiency has become a major technological and environmental concern.

Neuromorphic computing seeks to solve this problem by emulating the architecture of biological neural systems, where memory and processing are co-located within interconnected synapses rather than split across separate components.

“The brain remains the gold standard for efficient computation,” Guha explained in the university release.

The human brain performs extraordinarily complex cognitive operations using roughly 20 watts of power, far less than today’s AI accelerators and GPU clusters require for comparable tasks.

Synaptic transistors and organic electronics

The Mizzou team focused on developing organic synaptic transistors, electronic devices designed to mimic the adaptive behavior of biological synapses.

Unlike traditional transistors that merely switch electrical signals on and off, synaptic transistors can both process and retain information in the same physical structure. This capability allows them to emulate learning behavior directly in hardware.
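To make the idea of processing and retaining information in one structure concrete, here is a minimal toy sketch in Python. It is not a model of the Mizzou devices or of any real transistor: the class name `ToySynapse`, the update rule, and every parameter value (`learn_rate`, `retention`, the conductance bounds) are assumptions chosen purely for illustration. A single stored value, the conductance, both scales the incoming signal and is changed by it.

```python
from dataclasses import dataclass


@dataclass
class ToySynapse:
    """Illustrative synapse-like element (hypothetical, arbitrary units)."""

    conductance: float = 0.1  # stored state: the element's "memory"
    g_min: float = 0.0        # assumed lower bound on conductance
    g_max: float = 1.0        # assumed upper bound on conductance
    learn_rate: float = 0.2   # assumed strengthening per unit of input
    retention: float = 0.95   # assumed fraction of state kept per idle step

    def pulse(self, voltage: float) -> float:
        """Apply one input pulse: return the output, then update the state."""
        # "Processing": the output is the input scaled by the stored conductance.
        current = self.conductance * voltage
        # "Learning": each pulse nudges the conductance toward g_max.
        self.conductance += self.learn_rate * voltage * (self.g_max - self.conductance)
        # Keep the state within its assumed physical bounds.
        self.conductance = min(self.g_max, max(self.g_min, self.conductance))
        return current

    def rest(self) -> None:
        """One idle step: the stored state relaxes partway back toward g_min."""
        self.conductance = self.g_min + self.retention * (self.conductance - self.g_min)


# Five identical pulses: the response grows because the element "remembers" them.
syn = ToySynapse()
outputs = [syn.pulse(1.0) for _ in range(5)]
g_after_training = syn.conductance

# With no input, part of the stored state fades.
syn.rest()
g_after_rest = syn.conductance
```

In this sketch, repeated identical pulses produce a steadily larger response, and an idle step lets some of that stored state fade. A conventional on/off switch has no equivalent: it holds no internal state that past inputs could reshape.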

The researchers investigated a family of organic copolymer materials based on pyridyl triazole structures, studying how nanoscale interface characteristics affect synaptic performance.

Their findings revealed that even when materials appear nearly identical chemically, tiny structural variations at the interface between the semiconductor and insulating layer can significantly alter device behavior.

This “structure-function coupling” is critical because neuromorphic systems depend heavily on stable, tunable electronic behavior across millions, or eventually billions, of artificial synapses.

The growing importance of neuromorphic hardware

The Mizzou research arrives amid rapidly intensifying global interest in neuromorphic computing.

Scientists worldwide are investigating memristors, analog neural architectures, and brain-inspired materials as alternatives to conventional CMOS scaling. Recent studies from institutions including the University of Cambridge and Purdue University suggest next-generation neuromorphic devices could reduce AI energy consumption by as much as 70% while improving adaptability and parallelism.

The underlying motivation is increasingly urgent.

AI training systems now draw power at the megawatt scale, and global electricity demand from AI infrastructure is projected to rise sharply over the coming decade. Conventional scaling approaches alone are unlikely to sustain future growth.

Neuromorphic architectures offer a fundamentally different path forward.

Rather than executing rigid sequential instructions, brain-inspired systems process information through massively parallel networks of adaptive elements. This approach is especially promising for:

- Pattern recognition

- Autonomous systems

- Robotics

- Sensor fusion

- Edge AI computing

- Scientific simulation workloads

Materials science as the foundation of intelligent hardware

One of the most significant aspects of the Mizzou study is its emphasis on materials engineering rather than solely algorithmic optimization.

Neuromorphic computing is fundamentally constrained by device physics. Creating hardware that behaves like biological neural systems requires materials capable of analog switching, adaptive conductance, and low-power memory retention.

This places materials science at the center of future AI infrastructure development.

The Mizzou team demonstrated that interface quality, not simply chemical composition, plays a decisive role in determining synaptic behavior. These findings provide critical design principles for future neuromorphic hardware platforms.

The work aligns with broader trends in neuromorphic engineering, where researchers are increasingly integrating material physics, electronics, and neuroscience into unified computing architectures.

Implications for supercomputing and AI infrastructure

While neuromorphic systems remain in early development, their long-term implications for supercomputing could be profound.

Future HPC systems may integrate brain-inspired accelerators alongside traditional CPUs and GPUs to improve efficiency for AI-heavy workloads. Neuromorphic co-processors could dramatically reduce energy costs associated with machine learning inference, adaptive simulations, and real-time data analysis.

This shift would represent more than an incremental improvement in processor design.

It would signal a transition from deterministic computing architectures toward adaptive computational ecosystems modeled directly on biological intelligence.

Learning from biology

The Mizzou research highlights a broader transformation underway in computing science: the recognition that future computational breakthroughs may come not from forcing traditional architectures to scale further, but from rethinking computation itself.

Biological systems evolved extraordinarily efficient methods for processing information under strict energy constraints. Neuromorphic computing seeks to harness those same principles in silicon and organic electronics.

The path forward remains challenging. Large-scale manufacturing, reliability, programmability, and integration with existing AI frameworks all remain active areas of research.

Yet studies like the one from Mizzou suggest the field is steadily advancing toward practical, energy-efficient intelligent hardware.

As AI systems continue to grow, the future of computing may increasingly depend not on building bigger machines, but on building machines that think more like brains.
