Most people associate clouds with precipitation like rain or snow, but clouds influence many atmospheric processes. Cloud formation is affected by turbulence, which creates fluctuations in wind speed, wind direction, temperature, humidity, and the concentration of water droplets in the air. However, those interactions are currently difficult to study.

Scott Salesky, an assistant professor of meteorology in the College of Atmospheric and Geographic Sciences at the University of Oklahoma, is leading research that will improve the way clouds are represented in weather and climate models. The five-year project is funded by a $763,930 Faculty Early Career Development (CAREER) Award from the National Science Foundation.

“Clouds have a very important influence on Earth’s climate,” said Salesky. “There’s a lot of focus on the role of greenhouse gases in climate, but clouds are also very important. Clouds can reflect solar radiation, and changes in cloud cover could be as important to climate as increases in greenhouse gases.

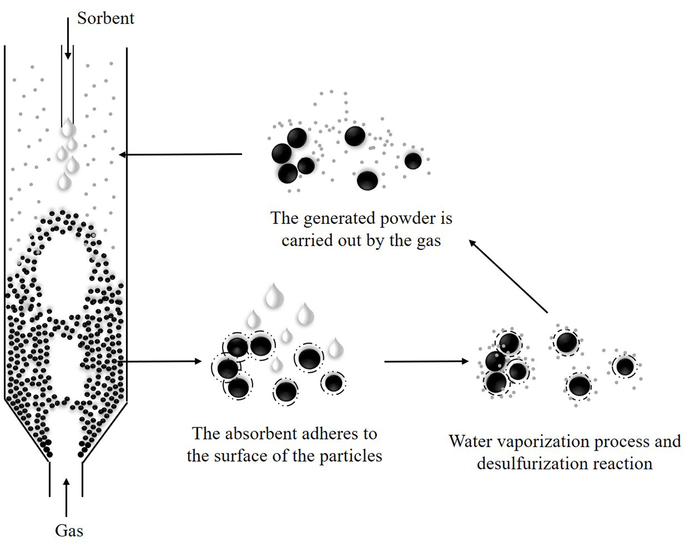

“The interesting thing about clouds is that there are a lot of processes that happen at different spatial scales that are all coupled together,” he added. “At very small scales, you have cloud droplets forming and growing, and they can interact with turbulence which can impact large-scale cloud properties, such as clouds’ lifetimes, how much sunlight they reflect (what we call albedo), and also the sizes of the droplets in the clouds, which can determine how quickly it might precipitate.”

This project will use simulations of small-scale interactions between millions of cloud droplets and turbulence and will allow the researchers to track the droplets’ positions, movements and sizes as they interact to form, grow and evaporate.

“When you run a weather or climate model, none of those small-scale processes are going to be resolved, so we’re learning how to accurately represent this small-scale droplet formation and growth and how it’s impacted by turbulence in large-scale weather and climate models,” Salesky said. “From this very small-scale information, we’re going to better understand interactions between turbulence and what we call the microphysics – droplet formation, growth, and evaporation.”

In the later stages of the project, Salesky plans to use the simulations he develops to improve meteorologists’ basic understanding of interactions between turbulence and clouds and to determine how to better represent cloud processes in weather and climate models.

The project also includes an educational component that aims to increase graduate enrollment in atmospheric sciences among students from other STEM fields and to develop lesson plans that connect engineering and physics concepts with atmospheric science.

“The majority of applicants to the meteorology graduate program have a bachelor’s degree in meteorology,” Salesky said. “We want to engage with students from physics, engineering, and other backgrounds to bring their experience with concepts like fluid dynamics to broaden the expertise in our field.”

Salesky is working with faculty partners at Cameron University in Lawton, the University of Science and Arts of Oklahoma in Chickasha, and the University of Central Oklahoma in Edmond to test lesson plans he will develop that teach physics concepts in a meteorological context. The faculty partners will then provide feedback on the lesson plans so that final versions can be made publicly available through the K20 LEARN Repository at OU’s K20 Center, a statewide research and development center. The collaboration will also support undergraduate research opportunities, giving students from the three schools experience in atmospheric science research at OU.

Berrien Moore, dean of the College of Atmospheric and Geographic Sciences at OU, said, “Professor Salesky’s receipt of an NSF CAREER Award sounds and is highly technical and quite advanced. It is also very important in subject matter and an extraordinary achievement for a young scientist.

“Not only will Scott advance important science, but he is also going to increase engagement of undergraduates from physics and other STEM fields in atmospheric science,” he added. “Both his scientific research and his teaching are focused on the future, and for that, we are both proud and thankful.”
