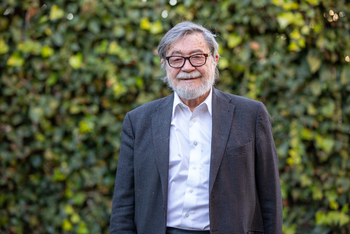

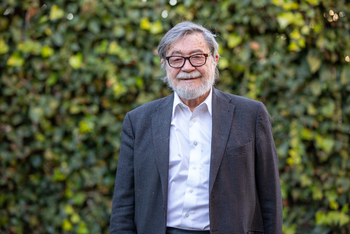

The BBVA Foundation Frontiers of Knowledge Award in Information and Communication Technologies has gone in this fourteenth edition to Judea Pearl for “bringing a modern foundation to artificial intelligence,” in the words of the selection committee. Pearl, a Professor of Computer Science and Director of the Cognitive Systems Laboratory at the University of California, Los Angeles (UCLA), has made conceptual, mathematical, and formal contributions that enable AI programs to effectively internalize two of the key resources we humans use to interpret the world and arrive at decisions: probability and causality. With the formal language he developed, these vital decision-making processes can be encoded into computer programs.

“By laying the mathematical foundations for probabilistic reasoning and the inference of causal relationships,” the citation continues, “Pearl constructed a framework not only for computer-based thought, but for fields spanning computer science, mathematics and statistics, epidemiology, and health, and the social sciences.”

The methods Pearl developed are taught today in every computer science school, and his books “have inspired sweeping new advancements across our understanding of reasoning and thought.” The “breadth and depth of his interdisciplinary impact” extends into multiple areas and applications, including “the development of fair and effective medical clinical trials,” the citation adds, as well as “in psychology, robotics, and biology.”

Pearl’s candidature was supported by eminent researchers in Europe and the United States, experts in computer science, artificial intelligence, psychology, economics, and philosophy. Among them is the Nobel laureate in Economics Daniel Kahneman, who says of his nominee: “During my long career I have known quite a few scholars who were recognized as giants in their field. I do not think I ever encountered one who evoked as much reverence and as much affection as Judea Pearl.”

Another of his nominators, Vinton G. Cerf, Vice President and Chief Internet Evangelist at Google (United States), recalls how he has “watched in admiration as his work has blazed new trails, often against conventional wisdom. Judea Pearl pressed on in his research in the face of considerable skepticism and his efforts were soundly rewarded.”

For nominator Ramón López de Mántaras, head of the Artificial Intelligence Research Institute (IIIA) of the Spanish National Research Council, “Pearl’s work is fundamental because it provides tools that will equip machines with the kind of cause-and-effect driven knowledge each one of us uses in our daily lives. He has devised a mathematical language whereby an AI system can ask not just ‘Why’ questions about the reasons things happen but also ‘What if’ questions, that is, what would have happened if things had been done differently. In medicine, for instance, we can ask what might have happened if we had prescribed the patient a different medication.”

A “less opaque” AI

Nominators are keen to emphasize that, compared with other approaches in AI and statistics, such as deep learning or neural networks, Pearl’s contribution lends a transparency that is vital in certain applications, such as decision-making in medicine and on legal or economic issues.

Vinton G. Cerf notes that Pearl’s latest books on causality and reasoning “are milestones in Bayesian analysis and machine learning. Pearl makes a powerful argument that without a causal model, the correlations discovered by deep neural networks will not bear much fruit.”

Pedro Larrañaga, Professor of Artificial Intelligence at the Universidad Politécnica de Madrid (UPM), has spent three decades studying Pearl’s contributions theoretically, as well as applying them in areas that include bioinformatics, neuroscience, and industry. As he sees it, the awardee’s insights facilitate a “less opaque” AI that reveals by what reasoning intelligent systems have arrived at a given conclusion: “Many of the recent breakthroughs in AI have been based on neural networks that employ deep-learning algorithms. But these programs are like black boxes, where it’s not possible to trace how they came to solve the problem. Lined up on the other side are those of us who work habitually with Pearl’s Bayesian networks, in the belief that interpretability has to be a cornerstone of AI.”

Larrañaga is convinced that this is the direction in which AI will head, especially in areas like medicine or in decisions with legal or financial implications: “There is a growing call both in the U.S. and Europe for an AI ethics, and for processes to be audited. That is why I think Pearl’s approach will win the day because it offers more transparency.”

Ramón López de Mántaras expresses a similar view, adding that the tools provided by Pearl’s work “bring explainability, that is, traceability to the chain of reasoning followed by an AI system in reaching its conclusion. This kind of system is more complex, but well worthwhile since it gives far greater confidence. A doctor, for example, can know why the machine has diagnosed a patient a certain way or recommended a certain treatment.”

“A computational model of deep understanding”

In an interview shortly after hearing of the award, Pearl summed up what he considered to be his essential contribution to modern AI: “For the first time we can understand what ‘understanding’ means, for the first time we have a computational model of deep understanding.” Pearl defines such understanding as “being able to answer questions of three important levels: prediction [what will happen in such and such a circumstance]; the effect of actions; and explanation, why things happened the way they did and what would have happened had things not occurred as they did. These three levels of sophistication are what the language now captures and that is what we mean by an understanding.”

Probabilistic reasoning and the ability to establish causal relationships are two areas where Pearl’s contributions are of pivotal importance. For Larrañaga, these are properties of the human mind that pose central challenges in AI: “Probabilistic reasoning is something we use all the time to deal with uncertainty, what we don’t know. A doctor, for instance, will always seek out the likeliest explanation for a patient’s symptoms.”

Machines that take decisions in conditions of uncertainty

Pearl has this to say about his contribution to understanding the probabilistic reasoning of the human brain, so it can be replicated by a computer system: “Uncertainty is the commodity that prevails in everyday decision-making, even crossing the street or taking aspirin or speaking with your friend, and we have quite a hard time in computer science to enable a computer to deal with the barrage of noise and uncertain information that one has about the world. So my work has developed a calculus for probabilistic reasoning that allows the computer to handle all the kinds of noise information that it gets, put them together, and come out with a probability about the conclusions.”

In the 1980s Pearl devised the mathematical language needed to wed classical AI with probability theory. His book Probabilistic Reasoning in Intelligent Systems, published in 1988 and still a landmark today, presented the graphical models, or “Bayesian networks,” that have since become a mainstay of machine learning and modern statistics.

A Bayesian network, as the committee describes it, “is a representation of events and their likelihood of occurrence. Such graphs enable simple and graphical articulations of highly complex event networks and their probabilistic relationships, which enabled computers to solve real-world scenarios, discover latent dependencies, and predict outcomes through the propagation of probabilities. Bayesian networks have become intuitive, precise, and widely-used tools for decision-making under uncertainty.”
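To make the idea of “propagation of probabilities” concrete, the following is a minimal sketch of a Bayesian network in Python. The three-node graph (Flu causing Fever and Cough), the variable names, and every probability in it are hypothetical values chosen for illustration; they do not come from Pearl’s work or from any real data. The sketch uses brute-force enumeration rather than the message-passing algorithms found in practical systems.

```python
from itertools import product

# Hypothetical network: Flu -> Fever, Flu -> Cough.
# All numbers are illustrative assumptions, not real clinical data.
P_FLU = {True: 0.1, False: 0.9}               # prior P(Flu)
P_FEVER_GIVEN_FLU = {True: 0.8, False: 0.1}   # P(Fever=True | Flu)
P_COUGH_GIVEN_FLU = {True: 0.7, False: 0.2}   # P(Cough=True | Flu)


def joint(flu, fever, cough):
    """Joint probability, factored along the edges of the graph."""
    p_fever = P_FEVER_GIVEN_FLU[flu] if fever else 1 - P_FEVER_GIVEN_FLU[flu]
    p_cough = P_COUGH_GIVEN_FLU[flu] if cough else 1 - P_COUGH_GIVEN_FLU[flu]
    return P_FLU[flu] * p_fever * p_cough


def posterior_flu(evidence):
    """P(Flu=True | evidence), summing the joint over unobserved variables.

    `evidence` maps "fever" and/or "cough" to an observed True/False value.
    """
    totals = {True: 0.0, False: 0.0}
    for flu, fever, cough in product([True, False], repeat=3):
        world = {"fever": fever, "cough": cough}
        # Keep only the worlds that agree with what was observed.
        if all(world[name] == value for name, value in evidence.items()):
            totals[flu] += joint(flu, fever, cough)
    return totals[True] / (totals[True] + totals[False])


if __name__ == "__main__":
    print(posterior_flu({}))
    print(posterior_flu({"fever": True}))
    print(posterior_flu({"fever": True, "cough": True}))
```

With these assumed numbers, observing a fever raises the probability of flu from 0.10 to roughly 0.47, and observing both fever and cough raises it to roughly 0.76. Each piece of evidence updates the belief in the unobserved cause, which is the behavior the committee’s description refers to.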

The importance of knowing how to infer causal relations between two phenomena cannot be overstated. It is a challenge for humans too, as Judea Pearl explains: “Causal relationships have been a very tough obstacle for both man and machine to handle because we do not have the language to capture the idea that the rooster’s crow does not cause the sun to rise, even though it comes before the sunrise and constantly predicts it.”

In his book Causality, published in 2000, Pearl introduced the world to causal calculus, which provides a formal framework for inferring causal relationships from data. “This enables us to understand how to predict the effect of interventions on outcomes,” the citation reads. “Pearl developed a mathematical language for distinguishing between causal relations and spurious correlations.”
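The difference between a spurious correlation and the effect of an intervention can be sketched with the rooster example. The Python snippet below assumes a hypothetical model in which the approach of dawn causes both the crow and the sunrise, with no arrow from crow to sunrise. The graph and all probabilities are illustrative assumptions. The intervention is computed by dropping the factor that normally determines the crow (often called truncated factorization), which is only one small piece of the causal calculus and is not meant as a full implementation of it.

```python
# Hypothetical model: Dawn -> Crow and Dawn -> Sunrise (no Crow -> Sunrise edge).
# "Dawn" means "daybreak is imminent in this time slot". Numbers are illustrative.
P_DAWN = 0.1                                   # P(Dawn=True)
P_CROW_GIVEN_DAWN = {True: 0.9, False: 0.05}   # P(Crow=True | Dawn)
P_SUN_GIVEN_DAWN = {True: 1.0, False: 0.0}     # P(Sunrise=True | Dawn)


def p_sunrise_given_crow():
    """Observational query: P(Sunrise | Crow=True), i.e. hearing the crow."""
    numerator = 0.0
    denominator = 0.0
    for dawn in (True, False):
        p_dawn = P_DAWN if dawn else 1 - P_DAWN
        p_crow = P_CROW_GIVEN_DAWN[dawn]
        numerator += p_dawn * p_crow * P_SUN_GIVEN_DAWN[dawn]
        denominator += p_dawn * p_crow
    return numerator / denominator


def p_sunrise_do_crow():
    """Interventional query: P(Sunrise | do(Crow=True)), i.e. forcing the crow.

    Forcing the rooster to crow removes the Dawn -> Crow factor, so Dawn keeps
    its prior distribution and the crow carries no information about it.
    """
    total = 0.0
    for dawn in (True, False):
        p_dawn = P_DAWN if dawn else 1 - P_DAWN
        total += p_dawn * P_SUN_GIVEN_DAWN[dawn]
    return total


if __name__ == "__main__":
    print(p_sunrise_given_crow())
    print(p_sunrise_do_crow())
```

Under these assumptions, hearing the crow raises the probability of sunrise to about 0.67, while making the rooster crow leaves it at the baseline of 0.10. The crow predicts the sunrise without causing it, and the two queries give different answers precisely because one conditions on an observation and the other models an action.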

Applications of the mathematization of causality

Getting machines to successfully detect causal relationships can unlock multiple applications. “Now we have a language to do that, so we can insert the knowledge that we have about the world and infer coherently as we do in algebra,” Pearl elaborates. “We infer the conclusion and the conclusion is proven to be correct if the assumptions are correct. The applications go from personalized medicine to handling an epidemic like COVID, putting together a variety of information sources from various parts of various countries, and coming out with a coherent conclusion based on the evidence that we have.”

On this point, Larrañaga relates how his team has supported health centers during the pandemic by applying Bayesian networks to decisions on which patient should be intubated, and in predicting the course of the disease: whether ICU admission would be required, or the length of hospitalization.

The BBVA Foundation centers its activity on the promotion of world-class scientific research and cultural creation and the recognition of talent.

The BBVA Foundation Frontiers of Knowledge Awards recognize and reward contributions of singular impact in science, technology, the humanities, and music, privileging those that significantly enlarge the stock of knowledge in a discipline, open up new fields, or build bridges between disciplinary areas. The goal of the awards, established in 2008, is to celebrate and promote the value of knowledge as a public good without frontiers, the best instrument at our command to take on the great global challenges of our time and expand the worldviews of individuals in a way that benefits all of humanity. Their eight categories address the knowledge map of the 21st century, from basic knowledge to fields devoted to understanding and interrelating the natural environment by way of closely connected domains such as biology and medicine or economics, information technologies, social sciences, and the humanities, and the universal art of music. They come with 400,000 euros in each of their eight categories, along with a diploma and a commemorative artwork created by artist Blanca Muñoz.

The BBVA Foundation has been aided from the outset in the evaluation of nominees for the Frontiers Award in Information and Communication Technologies by the Spanish National Research Council (CSIC), the country’s premier public research organization. CSIC appoints evaluation support panels made up of leading experts in the corresponding knowledge area, who are charged with undertaking an initial assessment of the candidates proposed by numerous institutions across the world, and drawing up a reasoned shortlist for the consideration of the award committees. CSIC is also responsible for designating each committee’s chair and participates in the selection of its members, thus helping to ensure objectivity in the recognition of innovation and scientific excellence.
