Ultra-precise lasers can be used for optical atomic clocks, quantum computers, power cable monitoring, and much more. But all lasers are noisy, and researchers from DTU Fotonik want to minimize that noise using machine learning.

The perfect laser does not exist. There is always some phase noise, because the frequency of the laser light fluctuates slightly back and forth. Phase noise prevents the laser from producing light waves with the perfect steadiness that is otherwise characteristic of laser light.
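To make the idea of a frequency that "moves back and forth" concrete, here is a minimal Python sketch. It assumes the simplest textbook model, pure white frequency noise, in which the laser phase performs a random walk whose steps have variance 2πΔν·dt, where Δν is the linewidth. The function names and parameter values are illustrative assumptions and are not taken from the DTU work.

```python
import math
import random
import statistics

def simulate_phase_noise(linewidth_hz, dt_s, n_samples, seed=0):
    """Return laser phase samples (radians) modelled as a Gaussian random walk.

    Assumes pure white frequency noise: each phase step has variance
    2 * pi * linewidth * dt, which corresponds to a Lorentzian lineshape.
    """
    rng = random.Random(seed)
    sigma = math.sqrt(2 * math.pi * linewidth_hz * dt_s)
    phase = [0.0]
    for _ in range(n_samples - 1):
        phase.append(phase[-1] + rng.gauss(0.0, sigma))
    return phase

def instantaneous_frequency(phase, dt_s):
    """Frequency offset (Hz) from the nominal laser frequency: (dphi/dt) / (2*pi)."""
    return [(b - a) / (2 * math.pi * dt_s) for a, b in zip(phase, phase[1:])]

# Illustrative values: a 1 kHz linewidth sampled every microsecond.
phase = simulate_phase_noise(linewidth_hz=1e3, dt_s=1e-6, n_samples=10_000)
freq = instantaneous_frequency(phase, dt_s=1e-6)
print(f"frequency offset wanders with a std. dev. of {statistics.pstdev(freq):.0f} Hz")
```

The phase itself drifts without bound, while the instantaneous frequency jitters around the nominal value; that jitter is what the article calls phase noise.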

Most of the lasers we use daily do not need to be completely precise. For example, it does not matter whether the frequency of the red laser light in supermarket barcode scanners varies slightly while reading the barcodes. But for certain applications, such as optical atomic clocks and optical measuring instruments, the laser must be so stable that its light frequency does not vary.

One way to get closer to an ultra-precise laser is to determine its phase noise. This may make it possible to compensate for the noise, so that the result is a purer and more accurate laser beam.
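Under a simple white-frequency-noise model, in which phase steps have variance 2πΔν·dt, determining the phase noise can be as basic as estimating the linewidth Δν from sampled phase. The sketch below does this on synthetic data with a known 1 kHz linewidth. The model, the data, and the names are assumptions for illustration; real laser noise usually needs a far richer model than a single parameter.

```python
import math
import random

def estimate_linewidth(phase, dt_s):
    """Estimate the Lorentzian linewidth (Hz) from sampled phase (radians).

    Under a white-frequency-noise model the phase increments have variance
    2*pi*linewidth*dt, so linewidth = var(increments) / (2*pi*dt).
    """
    inc = [b - a for a, b in zip(phase, phase[1:])]
    mean = sum(inc) / len(inc)
    var = sum((x - mean) ** 2 for x in inc) / (len(inc) - 1)
    return var / (2 * math.pi * dt_s)

# Synthetic test data: a random-walk phase with a known 1 kHz linewidth.
rng = random.Random(1)
dt = 1e-6
true_linewidth = 1e3
sigma = math.sqrt(2 * math.pi * true_linewidth * dt)
phase = [0.0]
for _ in range(50_000):
    phase.append(phase[-1] + rng.gauss(0.0, sigma))

estimate = estimate_linewidth(phase, dt)
print(f"estimated linewidth: {estimate:.0f} Hz")
```

The estimate lands close to the true 1 kHz, and its statistical uncertainty shrinks roughly as 1/√N with the number of samples N.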

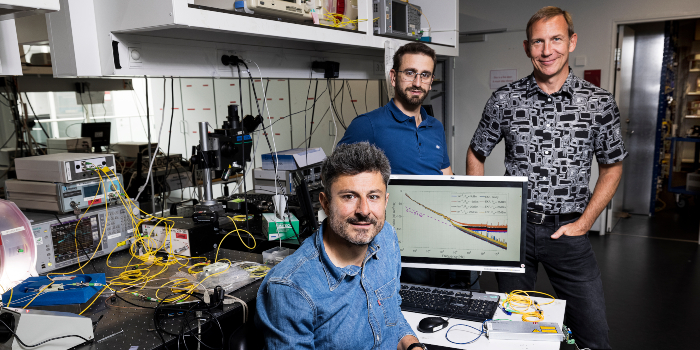

This is precisely what Professor Darko Zibar from DTU Fotonik is working on. He heads a research group called Machine Learning in Photonic Systems, where the goal is to develop and utilize machine learning to improve optical systems. Most recently, researchers from the group have characterized the noise from a laser system from the Danish company NKT Photonics with unprecedented precision.

“The question is how to measure that noise, and here we’ve developed the most accurate method available. We can measure much more precisely than others—our method has record-high sensitivity,” says Darko Zibar.

He has developed a machine learning algorithm that analyzes the laser light and finds patterns in it, while continuously refining a model of the noise. On this basis, the researchers hope to develop a kind of intelligent filter that continuously cleans the laser beam of noise.
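The article does not describe Zibar's algorithm in detail. As a stand-in, the sketch below uses a classic Kalman filter, a model-based estimator that is not the DTU method, to show how a statistical model of the noise lets a filter track a randomly wandering laser phase from noisy measurements. The noise variances `q` and `r` and all names are hypothetical.

```python
import math
import random

def kalman_track_phase(measurements, q, r):
    """Track a random-walk phase from noisy phase measurements.

    State model:       phi[k] = phi[k-1] + w,  w ~ N(0, q)
    Measurement model: y[k]   = phi[k]   + v,  v ~ N(0, r)
    Returns the filtered phase estimates.
    """
    est, p = measurements[0], r  # initialise from the first measurement
    out = [est]
    for y in measurements[1:]:
        p += q                   # predict: uncertainty grows by the process noise
        gain = p / (p + r)       # Kalman gain: how much to trust the new sample
        est += gain * (y - est)  # correct the estimate towards the measurement
        p *= 1 - gain            # updated uncertainty
        out.append(est)
    return out

def mse(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)

rng = random.Random(2)
q, r = 1e-3, 1e-1  # hypothetical process and measurement noise variances
true_phase, meas = [], []
phi = 0.0
for _ in range(5000):
    phi += rng.gauss(0.0, math.sqrt(q))
    true_phase.append(phi)
    meas.append(phi + rng.gauss(0.0, math.sqrt(r)))

filtered = kalman_track_phase(meas, q, r)
print(f"raw error {mse(meas, true_phase):.4f}, filtered error {mse(filtered, true_phase):.4f}")
```

Here `q` and `r` are assumed known; a scheme closer to the article's description of a continuously improving noise model would also have to learn them from the data.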

Quantum mechanics sets the limit

This is something that NKT Photonics can use in its optical measuring instruments, say Senior Researcher Poul Varming and his colleague Jens E. Pedersen, who has worked with the DTU researchers:

“We work with fiber lasers that emit constant light and have a particularly low noise level. Our most important task is to limit the noise, and, in terms of measuring technology, we had difficulty measuring noise at very high frequencies,” says Poul Varming, continuing:

“But then we got in touch with Darko Zibar and his group, and we produced some lasers for them. The researchers were able to measure the noise up to very high frequencies, and the results actually contradict the established understanding of laser noise.”

With the new, improved measuring method, the researchers were thus able to show that the theoretical basis for calculating the noise was incomplete. With more detailed knowledge of the noise, engineers can better identify the parts of the laser system where the noise originates, and therefore know where to make improvements. The hope is that the machine learning system can also be used to attenuate the noise in real time.

Noise cannot be eliminated entirely, because the laws of quantum mechanics set a fundamental limit on how good a laser can be. Quantum noise is impossible to get rid of, but now it can at least be measured, says Darko Zibar:

“We can measure at the frequencies where quantum noise is dominant. In this way, we can determine the fundamental noise and find out how much it contributes to the total noise. Once we know the fundamental limit for how good the laser can be, we can then figure out how to suppress the rest of the noise.”

“This is our next project—how we first identify and then suppress the noise, to obtain a laser that is only limited by quantum noise. This will enable us to produce some of the best lasers in the world.”
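A toy version of the noise budget Zibar describes can be sketched as follows. It assumes a one-sided frequency-noise spectrum made of a white floor h0, standing in for the fundamental quantum-limited part, plus a 1/f technical term. It estimates h0 from the high-frequency band where the floor dominates and reports what share of the noise the floor accounts for at each frequency. For white frequency noise the corresponding Lorentzian linewidth is πh0. The spectrum shape, coefficients, and band edge are assumptions, not measured data.

```python
import math
import statistics

def quantum_floor_budget(freqs_hz, psd, floor_band_start_hz):
    """Split a one-sided frequency-noise PSD (Hz^2/Hz) into a white floor and the rest.

    Assumes the white floor dominates above floor_band_start_hz, so its level
    h0 is estimated as the median PSD in that band. Returns h0, the matching
    intrinsic Lorentzian linewidth pi*h0 (Hz), and the fraction of the PSD
    attributable to the floor at each frequency (capped at 1).
    """
    band = [s for f, s in zip(freqs_hz, psd) if f >= floor_band_start_hz]
    h0 = statistics.median(band)
    fractions = [min(1.0, h0 / s) for s in psd]
    return h0, math.pi * h0, fractions

# Hypothetical spectrum: white floor plus 1/f technical noise, 10 Hz to 100 MHz.
freqs = [10 ** (k / 10) for k in range(10, 81)]
h0_true, h1 = 100.0, 1e8  # assumed coefficients
psd = [h0_true + h1 / f for f in freqs]

h0, linewidth, frac = quantum_floor_budget(freqs, psd, 1e7)
print(f"floor h0 ~ {h0:.1f} Hz^2/Hz, intrinsic linewidth ~ {linewidth:.0f} Hz")
print(f"floor share at 10 Hz: {frac[0]:.1e}, at 100 MHz: {frac[-1]:.2f}")
```

The residual 1/f term biases the floor estimate slightly upwards, which is one reason why measuring at very high frequencies, where the floor clearly dominates, matters.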

Optical cable feels vibrations

When the laser noise is known, it can be counteracted by roughly the same principle used in noise-cancelling headphones. There, microphones pick up sound from the surroundings, and the speakers then play a signal in antiphase, so that the noise and the new signal cancel each other out and the result is silence.
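The headphone principle can be illustrated numerically: adding a disturbance to an inverted copy of itself cancels it completely, while even a small phase error in the estimated copy leaves a residual. In this sketch a pure sine wave stands in for the noise, which is a simplifying assumption; real laser noise is random and must first be estimated.

```python
import math

def residual_after_cancellation(amplitude_error, phase_error_rad, n=1000):
    """RMS of (disturbance + anti-phase correction) over one period.

    The correction is the disturbance inverted, with a deliberate amplitude
    and phase mismatch to show how estimation errors limit cancellation.
    """
    total = 0.0
    for k in range(n):
        t = 2 * math.pi * k / n
        noise = math.sin(t)
        anti = -(1 + amplitude_error) * math.sin(t + phase_error_rad)
        total += (noise + anti) ** 2
    return math.sqrt(total / n)

print(residual_after_cancellation(0.0, 0.0))  # perfect estimate
print(residual_after_cancellation(0.0, 0.1))  # 0.1 rad phase error
```

The first call returns exactly 0.0; the second returns about 0.071, i.e. √2·sin(0.05), so a 0.1 rad error still removes roughly 93% of the disturbance's RMS amplitude.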

If the technique can improve lasers by eliminating a large part of the noise, so that the light frequency hardly varies, optical measuring instruments can gain greater sensitivity and a longer range. At NKT Photonics, the technology can initially be used for distributed acoustic sensing, where a fiber optic cable serves as a sensor for measuring tiny vibrations. Distributed acoustic sensing can be used for various forms of monitoring. For example, an optical fiber can be laid along an oil or gas pipeline to ensure ultrafast detection of any ruptures. The technology can also be used to monitor the fence around an airport or along a border: if a hole is cut in the fence or someone tries to climb over it, the system can not only signal what has happened but also pinpoint where it occurred.

Such an optical monitoring system works by sending a laser beam into the optical fiber. As the light travels along the fiber, a small fraction of it is reflected back by tiny impurities in the fiber. If the fiber is disturbed somewhere along its length, the properties of the reflected light change measurably. Even very faint vibrations can be picked up and located with great accuracy.
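Locating an event relies on timing: light scattered back from distance z arrives after the round-trip time t = 2nz/c, so z = ct/(2n), where n is the group index of the fiber. The sketch below converts the sample index of a change in a backscatter trace into a position. The group index of 1.468, the 10 ns sampling period, and the simple compare-two-traces detection are assumptions; practical systems typically analyse the phase of the backscattered light rather than a single amplitude change.

```python
C = 299_792_458.0  # speed of light in vacuum, m/s
N_GROUP = 1.468    # assumed group index of standard single-mode fiber

def delay_to_position(delay_s, n_group=N_GROUP):
    """Position (m) along the fiber for a given round-trip delay (s)."""
    # The light travels to the scattering point and back, hence the factor 2.
    return C * delay_s / (2 * n_group)

def locate_disturbance(trace_before, trace_after, sample_period_s):
    """Return the fiber position (m) where two backscatter traces differ most."""
    diffs = [abs(a - b) for a, b in zip(trace_before, trace_after)]
    idx = max(range(len(diffs)), key=diffs.__getitem__)
    return delay_to_position(idx * sample_period_s)

# Hypothetical traces sampled every 10 ns; a vibration alters sample 5000.
before = [1.0] * 10_000
after = list(before)
after[5000] = 1.3
print(round(locate_disturbance(before, after, 10e-9)))
```

With these numbers the script prints 5105, i.e. the disturbance is placed about 5.1 km along the fiber, and each 10 ns sample corresponds to roughly 1 m of fiber.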

Monitoring of cables to the energy islands

If the new technology from DTU provides more effective attenuation of laser noise, distributed acoustic sensing can be used over somewhat longer distances than today. Both the sensitivity and the range of distributed acoustic sensing can be increased with more precise lasers, and this may be needed, for example, when electricity is to be transported from the planned energy islands in the North Sea to the mainland. There, the power cables could be monitored with the technology so that any ruptures can be detected and repaired quickly. A current challenge is that today's systems have a maximum range of 50 km, while the distance to the energy islands will be somewhat longer.
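One way to see why a lower-noise laser can extend the reach is the coherence length, which for a Lorentzian line in fiber is often written L_c = c/(πnΔν); conventions differ by constant factors. The sketch below evaluates it for a few linewidths. The group index and the chosen linewidths are assumptions, and in practice fiber loss and other effects also limit the range.

```python
import math

C = 299_792_458.0  # speed of light in vacuum, m/s
N_GROUP = 1.468    # assumed group index of the fiber

def coherence_length_m(linewidth_hz, n_group=N_GROUP):
    """Coherence length in fiber for a Lorentzian line: L_c = c / (pi * n * linewidth)."""
    return C / (math.pi * n_group * linewidth_hz)

for lw in (10e3, 1e3, 100.0):
    print(f"{lw:>8.0f} Hz linewidth -> {coherence_length_m(lw) / 1e3:6.1f} km")
```

Under these assumptions, each tenfold reduction in linewidth lengthens the coherence length tenfold, from roughly 6.5 km at 10 kHz to roughly 650 km at 100 Hz.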

Poul Varming also mentions that several quantum technologies require extremely precise lasers. With noise-attenuated lasers, it becomes easier to develop ultra-precise optical atomic clocks and certain types of quantum computers, where lasers are used to cool individual atoms to near absolute zero. The new generation of laser systems that may result from the researchers’ and engineers’ work thus offers great potential.
