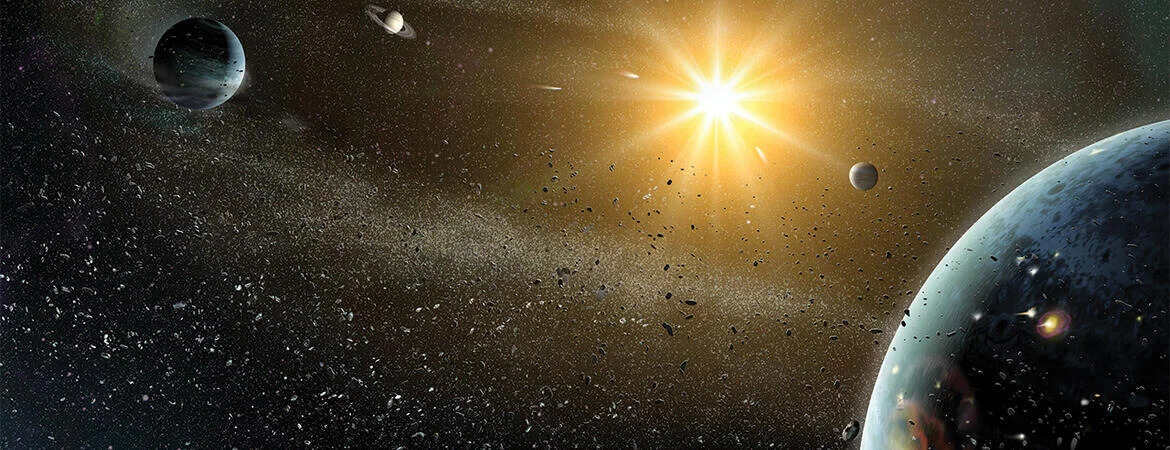

New research shows how giant gas planets harm habitable planets.

In a new study, UC Riverside researchers have demonstrated the harmful impact of giant planets on their Earth-like counterparts in other star systems. Unlike Jupiter, which protects our solar system, these giant planets cause chaos by displacing smaller planets from their orbits and disrupting their climates.

The study focuses on the HD 141399 star system, which serves as an excellent model for comparison with our solar system. The gravitational pull of the four giants in this system destabilizes the orbits of neighboring rocky planets. Multiple supercomputer simulations reveal that only a few areas within the habitable zone, which is defined as the range of distances from a star that allows for liquid water, have the potential to maintain stable Earth-like planets.

Lead author and UC Riverside astrophysicist Stephen Kane explains that only a select few areas within the habitable zone are safe from the giants' gravitational pull, which would otherwise knock a rocky planet out of its orbit and send it flying right out of the zone.

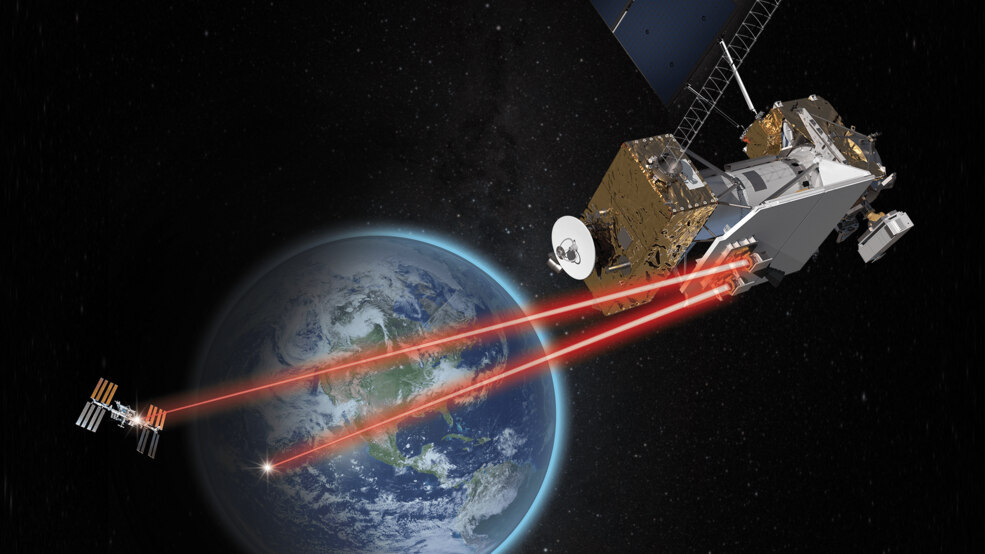

A second paper focuses on the star system GJ 357, located just 30 light-years from Earth. Although a planet named GJ 357 d resides within the habitable zone of this system, recent measurements suggest it may be larger than initially believed. This casts doubt on whether it is terrestrial and able to support life as we know it. The paper warns that if GJ 357 d is indeed significantly larger, it would force the orbits of any other Earth-like planets in the habitable zone to become highly elliptical, preventing them from coexisting there.

Co-author Tara Fetherolf, a UCR planetary science postdoctoral scholar, emphasizes that this paper is a warning not to assume that planets in the habitable zone are automatically capable of hosting life.

Together, the two papers show how rare the circumstances required to host life are. They are a reminder of how fortunate we are to have a planetary configuration that supports stability and the potential for life in our solar system.

While Jupiter safeguards our planet from comets and asteroids, creating a stable environment for life on Earth, the research reveals the vulnerability of other star systems. The presence of giant gas planets does not guarantee the protection or sustainability of neighboring Earth-like exoplanets.

These findings contribute to the ongoing effort to understand the conditions necessary for life elsewhere in the universe. The research is a step toward understanding distant star systems and the potential habitability of exoplanets.
