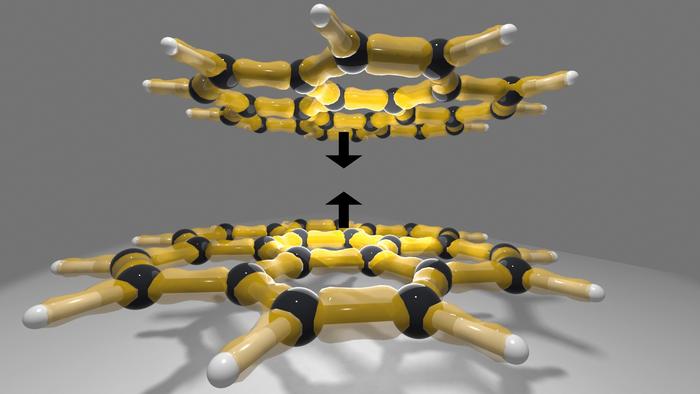

Scientists at the Vienna University of Technology (TU Wien) have developed a high-precision computational approach to enhance the understanding of how large molecules interact, specifically through the weak but pervasive van der Waals forces that enable geckos to adhere to surfaces. This breakthrough is anticipated to drive advancements in materials science, pharmaceuticals, and energy storage by providing greater reliability in predicting molecular behavior.

A puzzle solved

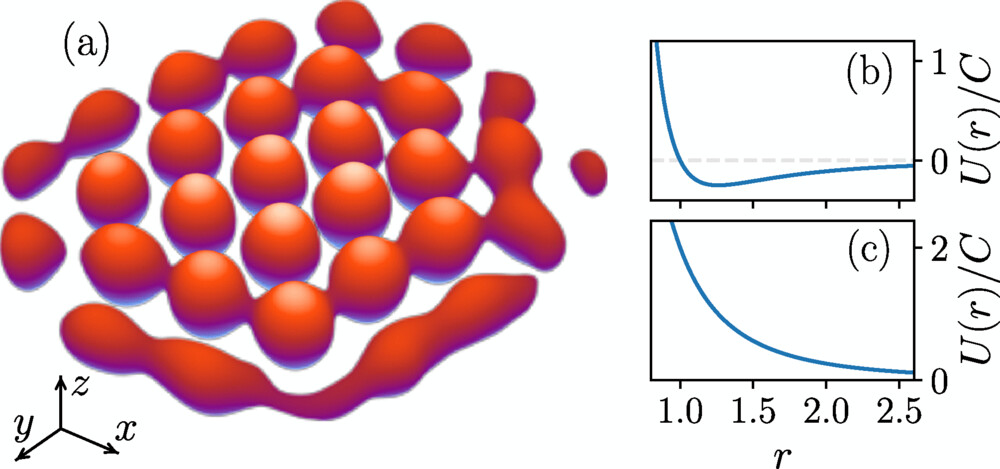

For many years, researchers in quantum chemistry have relied on two prominent computational methods: the "gold standard" coupled-cluster theory, specifically CCSD(T), and the stochastic diffusion quantum Monte Carlo (DMC) method. While both methods have provided near-benchmark accuracy for small molecules, discrepancies in predicted interaction energies emerged when applied to large, highly polarizable molecular systems.

The TU Wien team, led by Prof. Andreas Grüneis, along with Tobias Schäfer, Andreas Irmler, and Alejandro Gallo, investigated this divergence. They identified that CCSD(T) systematically overestimated binding energies in large molecular complexes, predicting stronger molecular interactions than were actually present.

Their new computational variant, designated CCSD(cT), incorporates selected higher-order corrections to the treatment of triple particle-hole excitations, which are significant for large, polarizable systems. This refinement effectively mitigates over-binding and aligns the computed values with the DMC results. The authors demonstrate in their study that CCSD(cT) achieves "chemical accuracy" (within approximately 1 kcal/mol) even for complexes comprising over 100 atoms.
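To make the "chemical accuracy" criterion concrete, here is a minimal sketch of how one might compare interaction energies from two methods. The function names and the energy values are hypothetical placeholders chosen for illustration; they are not results from the study. The sign convention (more negative means more strongly bound) is the usual one for a dimer interaction energy E(AB) - E(A) - E(B).

```python
# Illustrative sketch only: all numbers below are made-up placeholders,
# not values reported by the TU Wien team.

CHEMICAL_ACCURACY_KCAL_MOL = 1.0  # threshold quoted in the article


def interaction_energy(e_complex, e_monomer_a, e_monomer_b):
    """Interaction energy of a dimer, E(AB) - E(A) - E(B), in kcal/mol."""
    return e_complex - e_monomer_a - e_monomer_b


def within_chemical_accuracy(e_method, e_reference,
                             tol=CHEMICAL_ACCURACY_KCAL_MOL):
    """True if two interaction energies differ by at most `tol` kcal/mol."""
    return abs(e_method - e_reference) <= tol


# Hypothetical interaction energies (kcal/mol) for one large complex.
e_reference = -18.0   # stand-in for a DMC-like reference value
e_overbound = -20.5   # stand-in for an over-binding estimate
e_corrected = -18.6   # stand-in for a corrected estimate

print(within_chemical_accuracy(e_overbound, e_reference))
print(within_chemical_accuracy(e_corrected, e_reference))
```

With these placeholder values, the over-binding estimate misses the 1 kcal/mol window while the corrected one falls inside it, which is the kind of agreement the article describes.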

The super-computational method: what makes it special

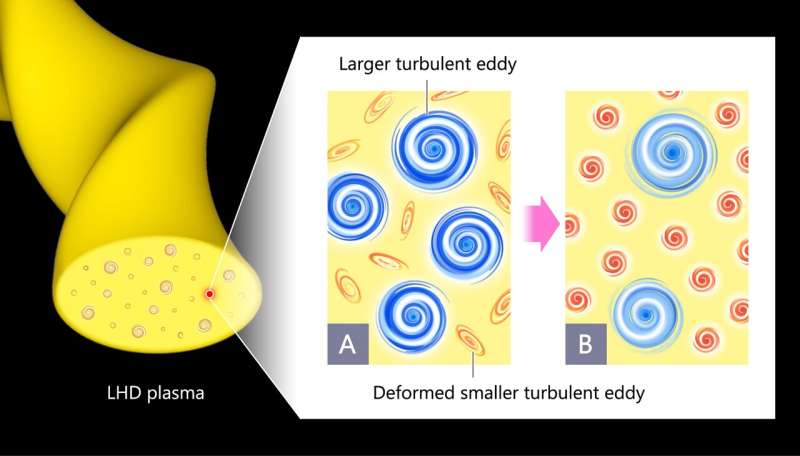

The key to the breakthrough isn’t simply more powerful hardware, but a clever adaptation of computational techniques and basis sets that fully exploits today’s supercomputing infrastructure. The authors report three major enablers:

- Massive parallelization – The workflow was implemented on high-performance computing (HPC) clusters using up to 50 compute nodes (each with 128 cores) for their largest tasks. The ability to distribute the workload allowed the team to avoid many of the local‐correlation approximations that earlier coupled-cluster calculations used to save time but at the cost of accuracy.

- Plane-wave basis sets – Instead of the conventional Gaussian-type atom-centered orbitals, the team employed a plane-wave basis set (commonly used in solid-state physics) for large molecular complexes, along with natural‐orbital truncation and singular‐value decomposed Coulomb integral factorization. These choices allowed unbiased and systematically improvable estimates for the interaction energies and reduced basis‐set error.

- Refined triple-excitation correction (cT) – The heart of the improvement is the correction to CCSD(T)’s (T) approximation. In short, (T) neglects certain diagrams, specifically terms like $[[\hat V, \hat T_2], \hat T_2]$, which are small for small, weakly polarizable molecules but become significant when molecules are large and highly polarizable. By including these terms in CCSD(cT), the team corrected the systematic over-binding of CCSD(T).
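For orientation, nested commutators of this kind arise in the standard Baker-Campbell-Hausdorff expansion used throughout coupled-cluster theory. The lines below are textbook operator algebra, written here with the doubles operator $\hat T_2$ only as a simplifying assumption; they are not taken from the TU Wien paper and do not describe how CCSD(cT) selects or evaluates these terms.

$$
e^{-\hat T_2}\,\hat V\,e^{\hat T_2}
  = \hat V + [\hat V, \hat T_2] + \tfrac{1}{2}\,[[\hat V, \hat T_2], \hat T_2] + \dots
$$

$$
[[\hat V, \hat T_2], \hat T_2]
  = \hat V \hat T_2^{2} - 2\,\hat T_2 \hat V \hat T_2 + \hat T_2^{2}\,\hat V
$$

The double commutator is quadratic in $\hat T_2$, which fits the article's point that such terms stay small when the amplitudes are small and grow in importance for large, highly polarizable systems.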

The method combines computational power with refined theory, effectively merging supercomputing and quantum chemistry. This "super-computational method" enables the reliable analysis of molecular systems that were previously too complex for theoretical models.

Why this matters: optimistic outlook

The implications are far-reaching:

- Materials science & energy: Many next-generation materials, such as hydrogen storage media, novel catalysts, 2D materials, and surfaces, rely on noncovalent interactions between large molecular or extended systems. Accurate benchmark interaction energies enable better materials design from first principles. The TU Wien team notes the importance for predicting hydrogen binding energies and drug crystallization, among other applications.

- Pharmaceuticals & biomolecules: Large molecules with many atoms—think proteins, drug–target systems, and crystals—are now becoming accessible to reliable computational modeling. That means faster, smarter virtual screening, better understanding of how drugs bind, how crystals form, and more.

- AI and machine learning models: Accurate benchmark data is the lifeblood of machine learning in chemical and materials modelling. The new method generates high‐quality reference data for large molecules, which can then be used to train ML models for faster predictions down the line. As the TU Wien release puts it, “Our results show that even well-established methods must be continuously re-examined to keep pace…”

- Science advancing: Perhaps most exciting is the idea that this demonstrates a new frontier: we are expanding the domain of accuracy in many-electron theory to ever larger systems. As the authors put it, “we are witnessing an unremitting expansion of the frontiers of accurate electronic structure theories to ever larger systems … which … has the potential to transform the paradigm of modern computational materials science.”

In short, the method opens doors. With ever-growing computational power and clever theoretical innovation, the old boundary of “accurate only for small molecules” is being lifted. That means more realistic modelling of real‐world systems, faster innovation in materials and biotech, and a hopeful horizon for computational science.

Looking ahead

Of course, challenges remain. The computations reported still required significant supercomputing resources (e.g., ~100k CPU hours for the benchmark coronene dimer), and the authors note that full canonical CCSD(cT) for still larger systems is not yet feasible—they use a fitted approximation (CCSD(cT)-fit) for the largest complexes they studied.
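As a rough back-of-the-envelope illustration of that cost, the figures quoted in this article (up to 50 nodes with 128 cores each, and roughly 100k CPU hours) can be turned into an idealized wall-clock estimate. This sketch assumes perfect parallel scaling and that both figures apply to the same run; the article states neither, so treat the result as an order-of-magnitude guide only. The function names are hypothetical.

```python
# Back-of-the-envelope sketch; assumes ideal (perfect) parallel scaling,
# which real coupled-cluster calculations will not achieve exactly.


def total_cores(nodes, cores_per_node):
    """Total number of CPU cores across all compute nodes."""
    return nodes * cores_per_node


def ideal_wall_hours(cpu_hours, cores):
    """Wall-clock hours if the CPU-hour budget were spread evenly over all cores."""
    return cpu_hours / cores


cores = total_cores(nodes=50, cores_per_node=128)        # figures quoted in the article
wall = ideal_wall_hours(cpu_hours=100_000, cores=cores)  # ~100k CPU hours, as quoted

print(f"{cores} cores, about {wall:.1f} h of wall time under ideal scaling")
```

Under these assumptions the job would occupy 6400 cores for somewhat under a day, which helps explain why such benchmarks are still limited to dedicated supercomputing allocations.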

But the path forward is clear: local correlation approaches and low-scaling methods can inherit the improvements of CCSD(cT), bringing accuracy to more systems at lower cost. As the paper states, “The more accurate CCSD(cT) approximation can directly be transferred to computationally efficient low-scaling and local correlation approaches, which will substantially advance…”

On an optimistic note, the “gold standard” itself has been improved. The TU Wien team shows that even widely trusted methods must evolve, and by driving that evolution, they are advancing the entire field. As we explore ever more complex molecular systems, from new energy materials to advanced drugs, having reliable computational methods is not just helpful; it is essential. With this breakthrough, the future of computational chemistry and materials science looks brighter than ever.
