Columbia engineering team combines quantum mechanics, machine learning to predict chemical reactions

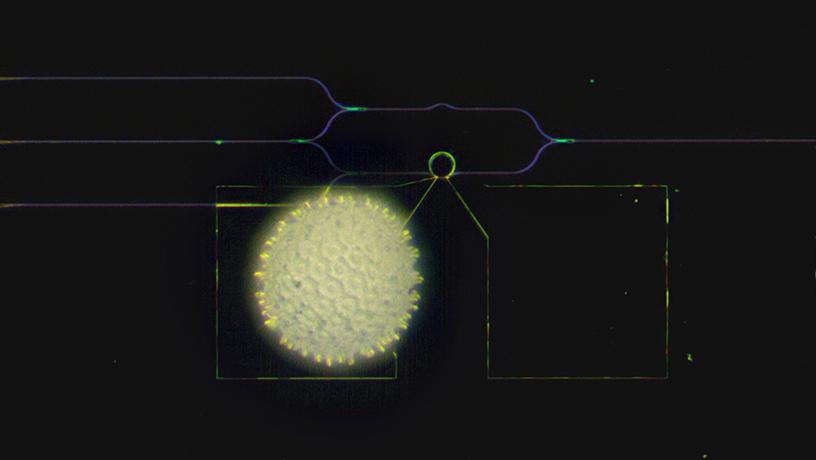

Extracting metals from oxides at high temperatures is essential not only for producing metals such as steel but also for recycling. Because current extraction processes are very carbon-intensive, emitting large quantities of greenhouse gases, researchers have been exploring new approaches to developing “greener” processes. This work has been especially challenging to do in the lab because it requires costly reactors. Building and running computer simulations would be an alternative, but currently, there is no computational method that can accurately predict oxide reactions at high temperatures when no experimental data is available.

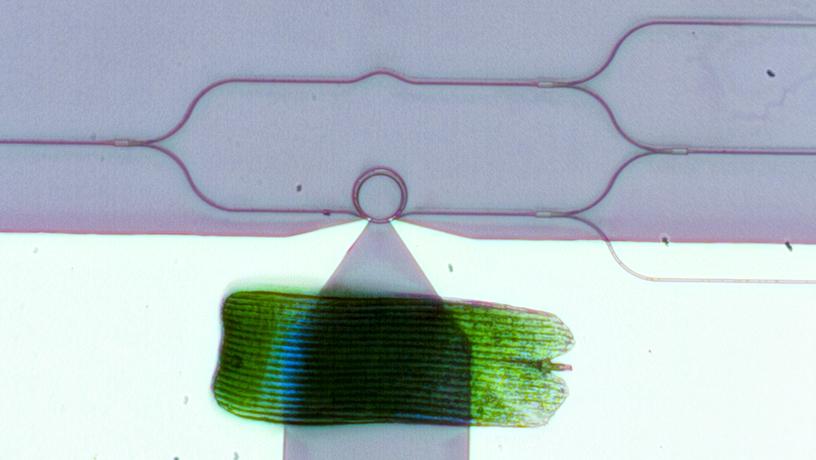

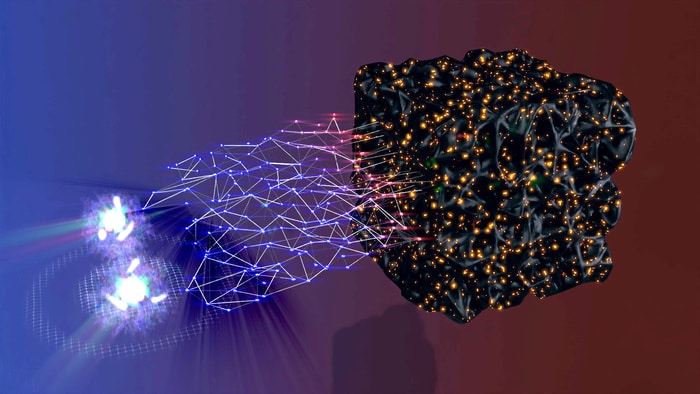

A Columbia Engineering team reports that they have developed a new computational technique that, by combining quantum mechanics and machine learning, can accurately predict the temperature at which metal oxides are reduced to their base metals. Their approach is as computationally efficient as conventional calculations at zero temperature and, in their tests, more accurate than computationally demanding simulations of temperature effects using quantum chemistry methods. The study was led by Alexander Urban, assistant professor of chemical engineering.

“Decarbonizing the chemical industry is critical if we are to transition to a more sustainable future, but developing alternatives for established industrial processes is very cost-intensive and time-consuming,” Urban said. “A bottom-up computational process design that doesn’t require initial experimental input would be an attractive alternative but has so far not been realized. This new study is, to our knowledge, the first time that a hybrid approach, combining computational calculations with AI, has been attempted for this application. And it’s the first demonstration that quantum-mechanics-based calculations can be used for the design of high-temperature processes.”

The researchers knew that, at very low temperatures, quantum-mechanics-based calculations can accurately predict the energy that chemical reactions require or release. They augmented this zero-temperature theory with a machine-learning model that learned the temperature dependence from publicly available high-temperature measurements. Although their approach focused on extracting metal at high temperatures, they designed it to also predict how the “free energy” changes with temperature, whether high or low.
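To make the link between free energy and reduction temperature concrete, here is a minimal Python sketch. It relies on the standard thermodynamic criterion that a reaction becomes favorable once its free energy change drops to zero or below, so the reduction temperature is the point where that change crosses zero. Everything specific in it is an assumption for illustration: the function names are hypothetical, the linear model only stands in for the team's zero-temperature energy plus machine-learned temperature dependence, and the numbers are made up rather than taken from the study.

```python
from typing import Callable


def make_linear_delta_g(delta_h: float, delta_s: float) -> Callable[[float], float]:
    """Hypothetical stand-in for a free-energy model: dG(T) = dH - T * dS.

    In the study the temperature dependence is learned from measurements;
    the linear form here is only an illustrative assumption.
    """
    return lambda temperature: delta_h - temperature * delta_s


def reduction_temperature(
    delta_g: Callable[[float], float],
    t_low: float,
    t_high: float,
    tol: float = 1e-3,
) -> float:
    """Find the temperature in [t_low, t_high] where delta_g crosses zero (bisection)."""
    g_low = delta_g(t_low)
    g_high = delta_g(t_high)
    if g_low * g_high > 0:
        raise ValueError("delta_g does not change sign in the given interval")
    while t_high - t_low > tol:
        t_mid = 0.5 * (t_low + t_high)
        g_mid = delta_g(t_mid)
        if g_low * g_mid <= 0:
            # The sign change lies in the lower half of the interval.
            t_high = t_mid
        else:
            # The sign change lies in the upper half of the interval.
            t_low, g_low = t_mid, g_mid
    return 0.5 * (t_low + t_high)


# Made-up illustrative values (kJ/mol and kJ/(mol K)), not data from the study.
example_model = make_linear_delta_g(delta_h=300.0, delta_s=0.2)
example_t_red = reduction_temperature(example_model, t_low=300.0, t_high=3000.0)
print(f"Estimated reduction temperature: {example_t_red:.1f} K")
```

With these placeholder values the zero crossing sits near 1500 K. Any free-energy model that returns a value for a given temperature could be passed in place of the linear one.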

“Free energy is a key quantity of thermodynamics and other temperature-dependent quantities can, in principle, be derived from it,” said José A. Garrido Torres, the study’s first author, who was a postdoctoral fellow in Urban’s lab and is now a research scientist at Princeton. “So we expect that our approach will also be useful to predict, for example, melting temperatures and solubilities for the design of clean electrolytic metal extraction processes that are powered by renewable electric energy.”

“The future just got a little bit closer,” said Nick Birbilis, Deputy Dean of the Australian National University College of Engineering and Computer Science and an expert in materials design with a focus on corrosion durability, who was not involved in the study. “Much of the human effort and sunken capital over the past century has been in the development of materials that we use every day – and that we rely on for our power, flight, and entertainment. Materials development is slow and costly, which makes machine learning a critical development for future materials design. For machine learning and AI to meet their potential, models must be mechanistically relevant and interpretable. This is precisely what the work of Urban and Garrido Torres demonstrates. Furthermore, the work takes a whole-of-system approach for one of the first times, linking atomistic simulations on one end to engineering applications on the other – via advanced algorithms.”

The team is now working on extending the approach to other temperature-dependent materials properties, such as solubility, conductivity, and melting temperature, that are needed to design electrolytic metal extraction processes that are carbon-free and powered by clean electric energy.
