WSU study pinpoints molecular weak spot in virus entry; supercomputing helps reveal the hidden dance

In a discovery that elegantly bridges biology and computation, researchers at Washington State University (WSU) have uncovered a microscopic "Achilles' heel" in how viruses invade human cells, with supercomputing-informed simulations playing a key role. While it appears to be a molecular biology breakthrough at first glance, a closer look reveals how computational science steered the experiment toward this target much faster than trial-and-error alone could have.

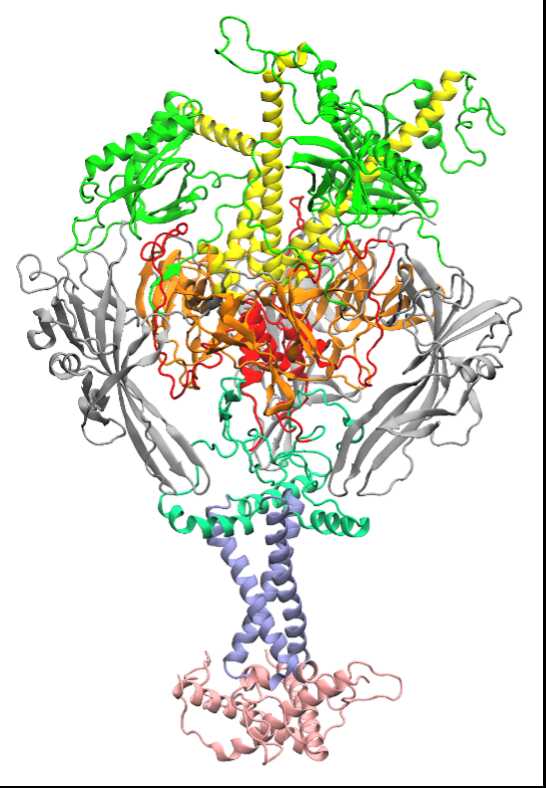

At the heart of the study is glycoprotein B, a complex fusion protein that herpesviruses use as a molecular grappling hook. This protein changes shape to fuse the viral membrane with a host cell’s membrane, allowing the virus to enter the host cell and begin its infectious cycle. Researchers have long known that fusion proteins like glycoprotein B are critical to infection, but pinpointing which interactions matter most among thousands of possible atomic-scale contacts is like searching for a needle in a haystack.

Simulations Sift the Signal from the Noise

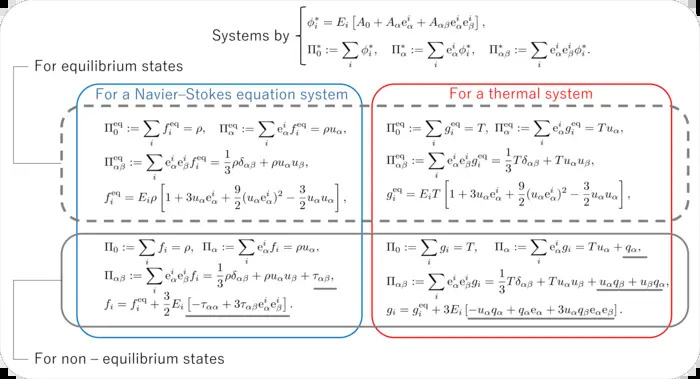

WSU’s team, a collaboration between mechanical engineers and veterinary microbiologists, leveraged artificial intelligence and large-scale simulation to navigate this haystack. Instead of testing each possible interaction experimentally (a process that could take years), they used machine learning to screen thousands of potential amino-acid contacts inside the fusion protein. The algorithms flagged the interactions that most strongly influence the protein’s ability to change shape and initiate membrane fusion.

That's where the supercomputing mindset comes in. The press release doesn't name a specific HPC center or piece of hardware, but the workflow it describes (training machine learning models on massive combinatorial data from protein structures and simulating dynamic interactions at the atomic scale) is precisely the sort of work that depends on high-performance computing. Without it, biologically relevant simulations of proteins in motion would be prohibitively slow.
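To make the screening idea concrete, here is a minimal toy sketch in Python. It is not the WSU team's method, which the article does not detail: it swaps in a simple correlation ranking for the machine learning model, and the data are synthetic. The function names, the `key_contact` index, and the "fusion score" are all hypothetical and exist only for illustration.

```python
import random
from statistics import mean, pstdev


def pearson(xs, ys):
    """Pearson correlation; returns 0.0 if either input is constant."""
    mx, my = mean(xs), mean(ys)
    sx, sy = pstdev(xs), pstdev(ys)
    if sx == 0 or sy == 0:
        return 0.0
    cov = mean((x - mx) * (y - my) for x, y in zip(xs, ys))
    return cov / (sx * sy)


def rank_contacts(contact_matrix, scores):
    """Rank contact indices by |correlation| with the score, strongest first."""
    n_contacts = len(contact_matrix[0])
    strengths = []
    for j in range(n_contacts):
        column = [row[j] for row in contact_matrix]
        strengths.append((abs(pearson(column, scores)), j))
    strengths.sort(reverse=True)
    return [j for _, j in strengths]


def make_synthetic_data(n_samples=500, n_contacts=200, key_contact=42, seed=0):
    """Synthetic stand-in data (an assumption, not real protein data).

    Each row marks which candidate contacts are present (1) or absent (0)
    in one hypothetical sample; the score depends on a single planted
    contact plus Gaussian noise.
    """
    rng = random.Random(seed)
    matrix, scores = [], []
    for _ in range(n_samples):
        row = [rng.randint(0, 1) for _ in range(n_contacts)]
        score = 2.0 * row[key_contact] + rng.gauss(0.0, 0.5)
        matrix.append(row)
        scores.append(score)
    return matrix, scores


matrix, scores = make_synthetic_data()
ranking = rank_contacts(matrix, scores)
print("Top-ranked contact index:", ranking[0])
```

On this synthetic data the planted contact should come out at the top of the ranking, which mirrors the logic of the workflow: score every candidate computationally, then spend laboratory effort only on the few that stand out. A real screen would use far richer features and models than a single correlation.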

Leveraging computationally derived insights, the team introduced a targeted mutation in one of the key amino acids identified by their model. The outcome was striking: viruses with the modified glycoprotein were unable to fuse with cells and gain entry. The invasion was effectively halted.

"This demonstrates how computational filtering can accelerate the pace of discovery," stated Jin Liu, the paper's corresponding author and professor in the School of Mechanical and Materials Engineering. Without these tools, the team believes the critical interaction could have remained hidden for years amidst the molecular background noise.

Why Supercomputing Matters Beyond Speed

High-performance computing isn’t just about running simulations faster. In complex biological systems, it’s about making the impossible tractable. Here’s how:

- Exploring vast interaction networks: The space of possible amino-acid interactions in a protein like glycoprotein B is enormous. Computational analysis helps narrow this down with statistical precision.

- Coupling dynamics with structure: Proteins are not static ornaments; they breathe, flex, and contort. Supercomputing makes it possible to simulate these fluctuations, which would otherwise remain invisible.

- Guiding biological experiments: By pointing experiments toward the most promising hypotheses, computation accelerates the entire research cycle.

The elegance of the WSU approach lies in its integration of wet-lab biology with in silico discovery, where simulations enhance rather than replace experiments.

Beyond This Study, Toward Broad Antiviral Insight

Blocking viral entry is a key strategy in antiviral design. Whether targeting influenza, HIV, herpesviruses, or coronaviruses, the initial molecular interaction between a virus and a host cell often determines the outcome. If computational methods can systematically identify the weakest points in these interactions, the implications for future drug development are significant.

Supercomputing is increasingly central to this effort. Exascale simulations of viral proteins enable researchers to follow molecular motions that unfold on microsecond timescales, dynamics that would otherwise remain unseen.

The WSU discovery doesn’t yet translate into a new drug or therapy; far more work lies ahead to understand how the mutated interaction affects the virus's full structural behavior in real biological systems. But it does represent a proof of concept: guided by computation, we can unmask the subtlest viral strategies and pre-emptively strike at them.

In a world still deeply familiar with the consequences of viral outbreaks, this kind of synergy between supercomputing and biology isn’t just intellectually exciting; it’s potentially transformative.
