Enzymes have the potential to transform the chemical industry by providing green alternatives to a slew of processes. These proteins act as biological catalysts, and with the help of molecular engineering, they can make naturally occurring reactions shift into turbo mode. Tailor-made enzymes could, for example, lead to nonpolluting drug manufacture; they could also safely break down pollutants, sewage, and agricultural waste, and then turn them into biofuel or animal feed.

A new Weizmann Institute of Science study, published today in Science, brings this vision closer to reality. In their report, the researchers, headed by Prof. Sarel Fleishman of the Biomolecular Sciences Department, unveil a computational method for designing thousands of different active enzymes with unprecedented efficiency by assembling them from engineered modular building blocks.

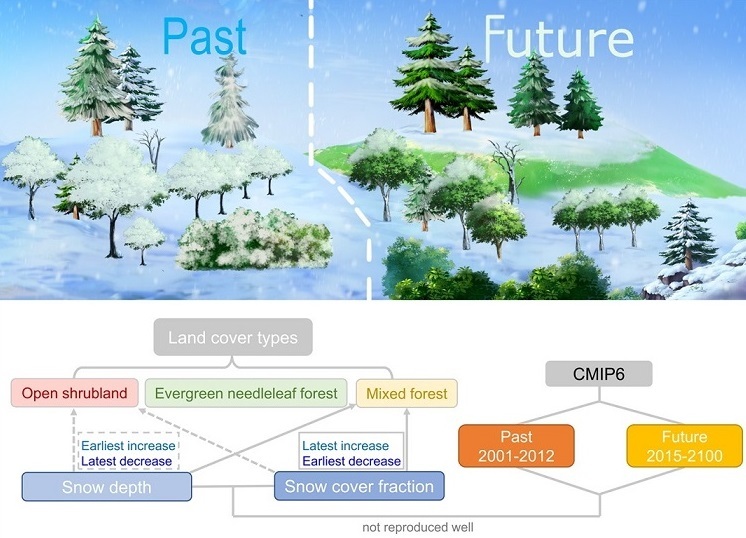

Biochemists typically design new enzymes by randomly tweaking the DNA of naturally existing ones and screening the resultant variants for a desired activity, a process that can be extremely time-consuming. Fleishman’s team came up with the idea of generating large numbers of vastly diverse enzymes by breaking down natural ones into constituent fragments that can then be altered and recombined in various ways.

The inspiration for this new approach came from within: our immune system, which is capable of making billions of different antibodies – proteins that in principle can counter any harmful microorganism – just from the bits dictated by a relatively small number of genes. “Antibodies are the only family of proteins in nature known to be generated in a modular way,” Fleishman explains. “Their huge diversity is achieved by recombining preexisting genetic fragments, similar to how a new kind of electronic device is assembled from preexisting transistors and processing units.”

Could enzymes be generated, like antibodies, from lab-designed modular fragments that combine into larger structures?

Rosalie Lipsh-Sokolik, a Ph.D. student who led the study in Fleishman’s lab, started experimenting with a family of several dozen enzymes that break down xylan, a common component of plant cell walls. “If we manage to boost the activity of these enzymes, they might be used for breaking down plant compounds such as xylan and cellulose into sugars, which in turn can help generate biofuels,” Lipsh-Sokolik says. “Instead of disposing of agricultural waste, for example, we should be able to turn it into an energy source.”

Lipsh-Sokolik developed an algorithm that combines physics-based protein design calculations with a new machine-learning model. The algorithm broke down each of the xylan-breaking enzyme sequences into several fragments and then introduced dozens of mutations into those pieces, all in ways that maximized the potential compatibility of the different bits. It then assembled the fragments into different combinations and selected a million enzyme-encoding sequences that were deemed to be stable.
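To make the combinatorial idea concrete, here is a minimal Python sketch of assembling sequences from pools of fragment variants and keeping the best-scoring ones. It is an illustration only, not the team's algorithm: the fragment strings, the `toy_stability_score` function, and the cutoff of four are all invented for the example, and the real method relies on physics-based design calculations and a machine-learning model rather than a simple count.

```python
from itertools import product

# Hypothetical fragment pools: each position in the enzyme has a few
# designed variants. These are toy strings, not real enzyme sequences.
fragment_pools = [
    ["MKT", "MRT"],             # variants for fragment 1
    ["GAVL", "GSVL", "GAIL"],   # variants for fragment 2
    ["WQE", "WQD"],             # variants for fragment 3
]

def toy_stability_score(sequence: str) -> int:
    """Placeholder score (assumption): counts A, V, I and L residues.
    It stands in for a real stability calculation."""
    return sum(sequence.count(aa) for aa in "AVIL")

def assemble(pools):
    """Join one variant from each pool, for every possible combination."""
    return ["".join(combo) for combo in product(*pools)]

def select_top(sequences, score, n):
    """Keep the n highest-scoring sequences."""
    return sorted(sequences, key=score, reverse=True)[:n]

candidates = assemble(fragment_pools)   # 2 * 3 * 2 combinations
selected = select_top(candidates, toy_stability_score, 4)
```

Even with only a handful of variants per fragment, the number of combinations is the product of the pool sizes, which is how a modest set of building blocks can yield a very large repertoire.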

The next step for Lipsh-Sokolik and colleagues was to synthesize a million actual enzymes from these supercomputer models and test them in the lab. To their surprise, 3,000 were confirmed to be active. “The first time we looked at the experimental results, we were amazed,” Fleishman says. “The 0.3 percent success rate is not high, but the sheer number of different active enzymes we got was staggering. In typical protein design and engineering studies, you see maybe a dozen active enzymes.”

Armed with an extensive repertoire of enzymes, the researchers then asked a key question that interests protein researchers: What molecular features distinguish active enzymes from inactive ones?

Using machine learning tools, Lipsh-Sokolik examined about a hundred features that characterize enzymes and used the ten most promising ones to create an activity predictor. When she incorporated this activity predictor into her algorithm and repeated the design experiment with the xylan-breaking enzymes, this second-generation repertoire had as many as 9,000 enzymes that broke down xylan and another 3,000 that could break down cellulose, adding up to a total of 12,000 active enzymes. This was a tenfold increase in success rate over the initial experiment, and an unparalleled feat in the history of protein design: The team managed, in a single experiment, to design more potentially active enzymes than standard methods could produce in a decade.
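The general pattern described here, ranking many features and building a predictor from the most informative few, can be sketched in a few lines of Python. This is a toy under stated assumptions: the feature names, the data, the ranking rule (gap between class means), and the nearest-centroid classifier are all invented for illustration, since the article does not say which features or which model the researchers used.

```python
from statistics import mean

# Toy data (invented): each design has named features and an activity label.
designs = [
    ({"f1": 0.9, "f2": 0.1, "f3": 0.5}, True),
    ({"f1": 0.8, "f2": 0.3, "f3": 0.4}, True),
    ({"f1": 0.2, "f2": 0.2, "f3": 0.5}, False),
    ({"f1": 0.1, "f2": 0.4, "f3": 0.6}, False),
]

def rank_features(data):
    """Order feature names by the gap between active and inactive means."""
    names = list(data[0][0].keys())

    def gap(name):
        active = mean(f[name] for f, label in data if label)
        inactive = mean(f[name] for f, label in data if not label)
        return abs(active - inactive)

    return sorted(names, key=gap, reverse=True)

def make_predictor(data, features):
    """Nearest-centroid rule that looks only at the chosen features."""
    def centroid(label):
        return [mean(f[n] for f, l in data if l == label) for n in features]

    c_active, c_inactive = centroid(True), centroid(False)

    def predict(f):
        point = [f[n] for n in features]
        d_active = sum((p - c) ** 2 for p, c in zip(point, c_active))
        d_inactive = sum((p - c) ** 2 for p, c in zip(point, c_inactive))
        return d_active < d_inactive   # True means "predicted active"

    return predict

top_features = rank_features(designs)[:1]   # keep the single best feature
predict = make_predictor(designs, top_features)
```

In the study the selection was ten features out of about a hundred; the sketch keeps one out of three simply to stay small.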

Not only that, these thousands of active variants were exceptionally diverse in both sequence and structure, which suggests that they may perform a wide variety of new functions.

“When you can create enzymes with such high levels of activity using a completely automated method that you now know is also incredibly reliable, that is really good news,” Lipsh-Sokolik says. Fleishman says the new Weizmann method, which the scientists call CADENZ – short for Combinatorial Assembly and Design of Enzymes – can, theoretically, be applied to any family of proteins. His team is already exploring its applications to the generation of new, improved antibodies or the creation of variants of the fluorescent proteins widely used as labels in biology.

“One of my goals is to change the way people engineer enzymes, antibodies, and other proteins,” Fleishman says. “Protein engineering is becoming a central part of the economy and public health: Industrial enzymes are proteins; antibodies and vaccines are also proteins. We need to be able to optimize them and to generate new ones in a robust and reliable way.”

The study’s participants included Dr. Olga Khersonsky and Shlomo-Yakir Hoch of the Weizmann Institute of Science’s Biomolecular Sciences Department; Drs. Sybrin P. Schröder and Casper de Boer and Prof. Hermen S. Overkleeft of Leiden University, the Netherlands; and Prof. Gideon J. Davies of the University of York, UK.
