The study reveals that whistler-mode chorus waves similar to Earth's have been detected on Mars, validating the role of magnetic field inhomogeneity in the frequency sweeping phenomenon.

Chinese scientists have reproduced the whistler-mode chorus waves observed on Mars using data from the Mars Atmosphere and Volatile Evolution (MAVEN) mission and compared them with the same phenomenon on Earth. They found that both Mars and Earth exhibit whistler-mode chorus waves triggered by nonlinear processes, with background magnetic field inhomogeneity playing the key role in frequency sweeping.

The study provides crucial support for understanding chorus waves in the Martian environment and verifies the previously proposed "Trap-Release-Amplify" (TaRA) model under more extreme conditions.

Whistler-mode chorus waves are electromagnetic wave emissions widely present in planetary magnetospheres. When their electromagnetic signals are converted into sound, they resemble the harmonious chorus of birds in the early morning, hence the name "chorus waves." Chorus waves can accelerate high-energy electrons in space through resonance, leading to a rapid increase in electron flux in Earth's radiation belts during geomagnetic storms. They also scatter high-energy electrons into the atmosphere, creating diffuse and pulsating auroras.

One characteristic of chorus waves is their narrowband frequency sweeping structure, in which the frequency of each wave element rises or falls over time. The excitation mechanism of this structure has been of great interest for decades, and scientists have proposed various theoretical models. However, debate continues over why frequency sweeping occurs in chorus waves and how to calculate the frequency sweep rate. One central point of contention is whether background magnetic field inhomogeneity plays a crucial role in frequency sweeping and, if so, how it affects the sweeping.
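To make the idea of a frequency sweep concrete, the following is a minimal sketch that builds a synthetic rising tone and measures how fast its frequency changes. It is an illustration only: the function names, the starting frequency, and the sweep rate are arbitrary assumptions chosen for the example, not values or formulas from the study or from the TaRA model.

```python
import math

def rising_tone(f0, sweep_rate, duration, sample_rate):
    """Sample a tone whose frequency rises linearly: f(t) = f0 + sweep_rate * t."""
    n = int(duration * sample_rate)
    samples = []
    for i in range(n):
        t = i / sample_rate
        # The phase is the time integral of the instantaneous frequency.
        phase = 2.0 * math.pi * (f0 * t + 0.5 * sweep_rate * t * t)
        samples.append(math.sin(phase))
    return samples

def upward_crossing_times(samples, sample_rate):
    """Times at which the signal crosses zero going upward (linear interpolation)."""
    times = []
    for i in range(1, len(samples)):
        a, b = samples[i - 1], samples[i]
        if a < 0.0 <= b:
            frac = -a / (b - a)
            times.append((i - 1 + frac) / sample_rate)
    return times

def estimate_sweep_rate(samples, sample_rate):
    """Fit a straight line to frequency versus time and return its slope in Hz/s."""
    crossings = upward_crossing_times(samples, sample_rate)
    # One full wave period lies between consecutive upward crossings.
    mid_times = [(t0 + t1) / 2.0 for t0, t1 in zip(crossings, crossings[1:])]
    freqs = [1.0 / (t1 - t0) for t0, t1 in zip(crossings, crossings[1:])]
    mean_t = sum(mid_times) / len(mid_times)
    mean_f = sum(freqs) / len(freqs)
    num = sum((t - mean_t) * (f - mean_f) for t, f in zip(mid_times, freqs))
    den = sum((t - mean_t) ** 2 for t in mid_times)
    return num / den

# Hypothetical illustration values, not measurements from MAVEN or Earth.
SAMPLE_RATE = 50_000   # samples per second
tone = rising_tone(f0=1_000.0, sweep_rate=5_000.0, duration=0.2,
                   sample_rate=SAMPLE_RATE)
estimated = estimate_sweep_rate(tone, SAMPLE_RATE)
print(f"estimated sweep rate: {estimated:.0f} Hz/s")
```

The estimate recovers the slope that was put into the synthetic tone. For real chorus elements, the debated question is which physical quantities set that slope, which this sketch does not attempt to model.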

The TaRA model, previously proposed by a team from the University of Science and Technology of China of the Chinese Academy of Sciences, is based on modern plasma physics theory. It suggests that the frequency sweeping of chorus waves in the magnetosphere results from the combined effects of nonlinear processes and background magnetic field inhomogeneity, and it provides a corresponding formula for calculating the frequency sweep rate. However, magnetic field inhomogeneity varies only over a limited range in Earth's magnetosphere, making it difficult to test the TaRA model across a larger parameter space.

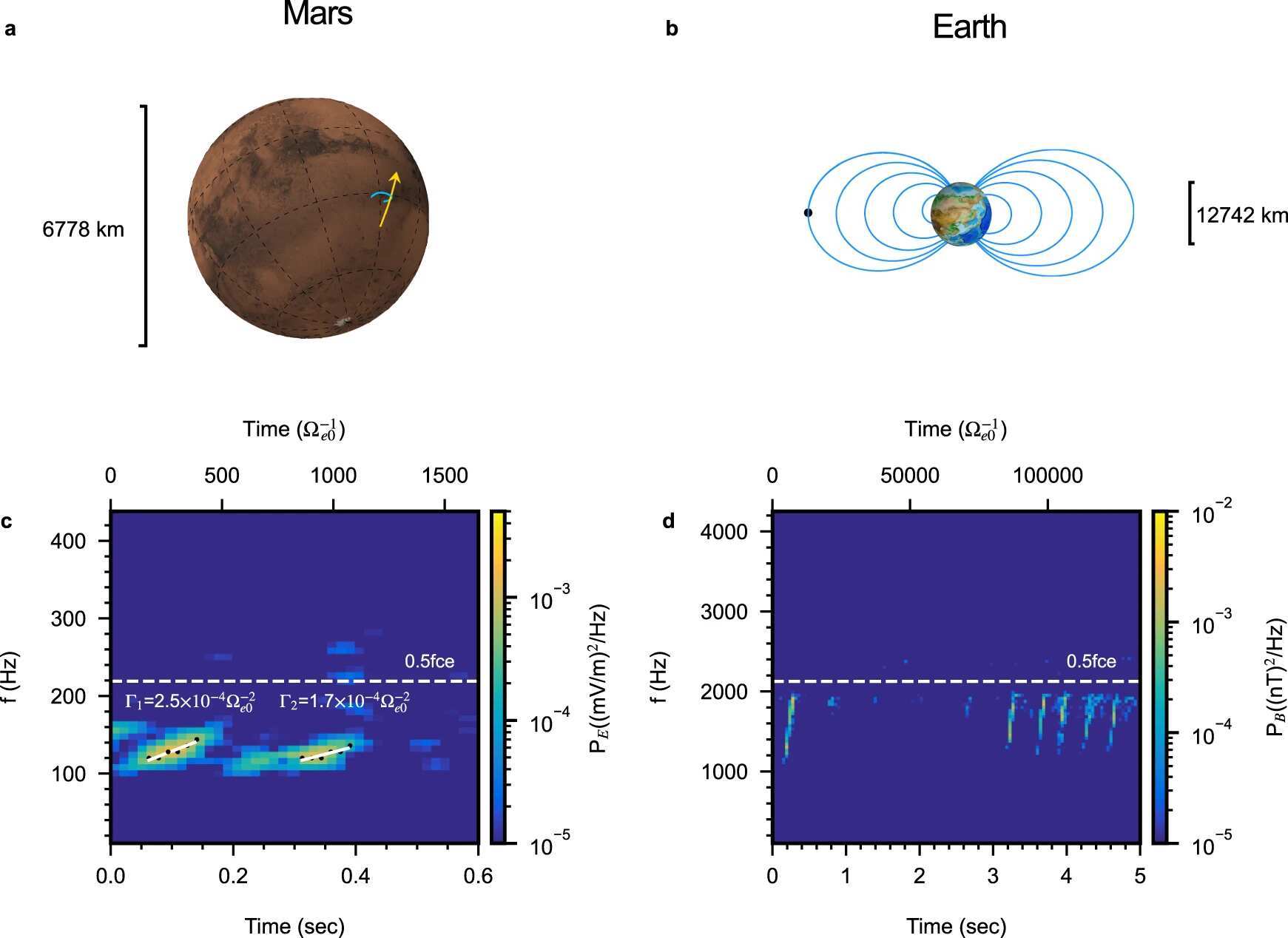

Mars and Earth have distinct magnetic field environments. Earth possesses a global dipole-like magnetic field, while Mars has only localized remnant magnetization. Similar chorus wave events have nevertheless been observed by the MAVEN satellite in the remnant magnetization environment of Mars. Calculations reveal a difference of five orders of magnitude in background magnetic field inhomogeneity between Mars and Earth. Comparing wave events observed at the two planets therefore allows the TaRA model to be tested under more extreme conditions.

To validate the model, scientists from the University of Science and Technology of China and their collaborators took the particle distribution observed at Mars by the MAVEN satellite and combined it with the corresponding model of the Martian crustal remnant magnetic field.

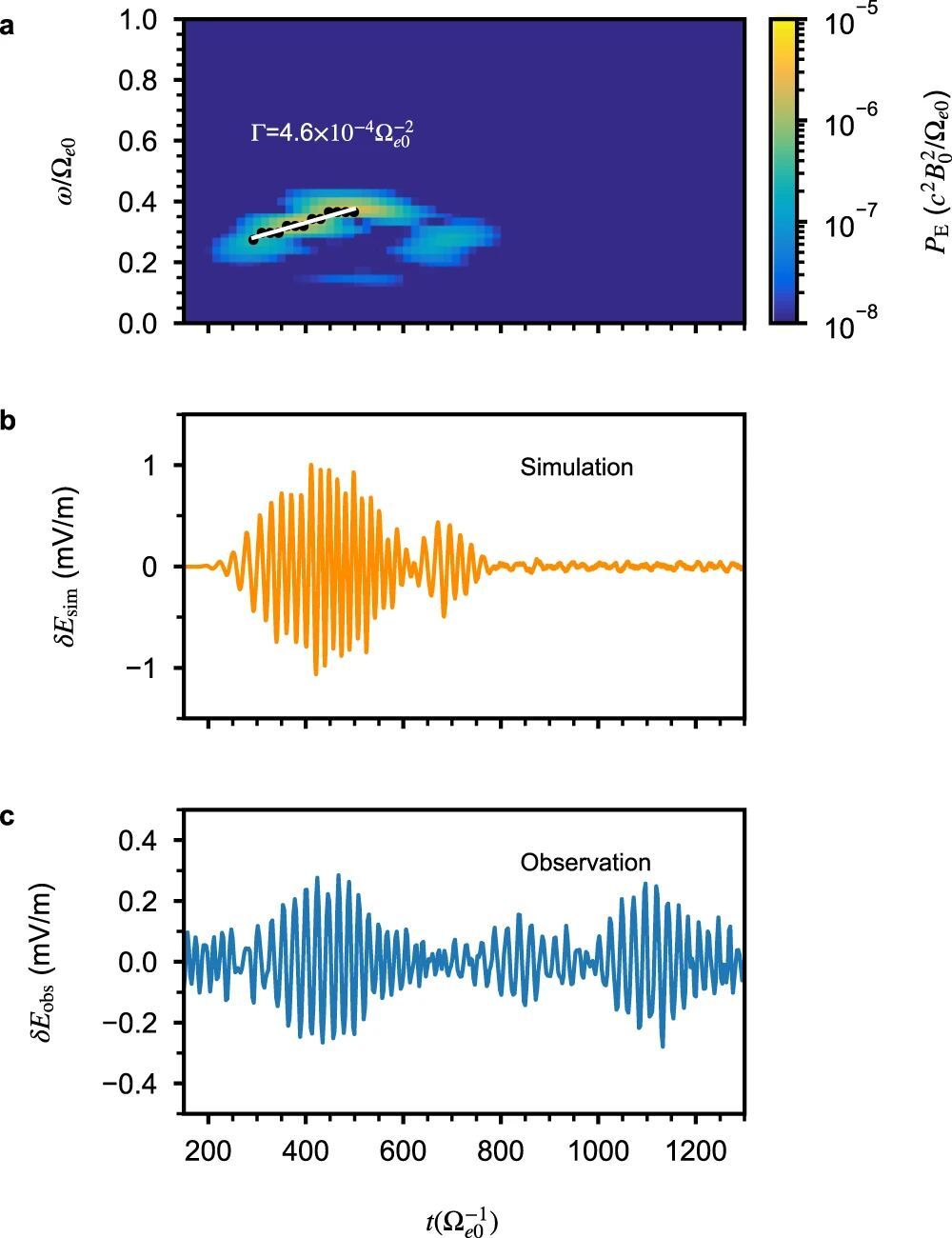

Employing a first-principles particle simulation method, the scientists reproduced the chorus wave phenomena observed on Mars. By analyzing the particle phase space distribution, they confirmed that the sweeping process of these waves is consistent with that of chorus waves on Earth: both are triggered by nonlinear processes.

Furthermore, the scientists used two different methods provided by the TaRA model to calculate the frequency sweep rate of the chorus waves and compared the results with the observations and simulations. The sweep rates calculated on the basis of nonlinear processes and background magnetic field inhomogeneity were highly consistent with the supercomputer simulation results.

These findings indicate that although Mars and Earth possess distinct magnetic and plasma environments, the chorus wave phenomena observed on Mars follow the same fundamental physical processes as those in Earth's magnetosphere. The study confirms the existence of chorus waves on Mars and validates the wide applicability of the TaRA model in describing the sweeping process of chorus waves, even across a difference of five orders of magnitude in magnetic field inhomogeneity.
