The UK Secretary of State for Science, Innovation, and Technology, Chloe Smith, has announced a series of investments to support research into trustworthy artificial intelligence (AI).

- £54 million investment to support the UK’s AI and data science workforce and develop trustworthy and secure AI

- new Geospatial Strategy to drive growth through technologies including AI, satellite imaging, and real-time data

- a new pilot program backed by up to £50 million in government funding to accelerate new research ventures with industry, philanthropic organizations, and the third sector

Universities across the UK are set to benefit from a substantial £54 million investment in their work to develop cutting-edge artificial intelligence (AI) technology, Technology Secretary Chloe Smith announced today.

Delivered through UK Research and Innovation (UKRI), £31 million of the funding will back ground-breaking research at the University of Southampton to establish responsible and trustworthy AI. The work will bring together the expertise of academia, business, and the wider public to explore how responsible AI can be developed and used while considering its impact on wider society.

The Technology Secretary unveiled the package in a keynote speech at London Tech Week, advancing efforts to secure the UK’s position as a science and tech superpower, fuel economic growth, and create better-paid jobs. The Tech Secretary also announced the launch of the UK Geospatial Strategy 2030, which will unlock billions of pounds in economic benefits through harnessing technologies including AI, satellite imaging, and real-time data.

Technology Secretary Chloe Smith said: "Despite our size as a small island nation, the UK is a technology powerhouse. Last year, the UK became just the third country in the world to have a tech sector valued at $1 trillion. It is the biggest in Europe by some distance and behind only the US and China globally.

"The technology landscape, though, is constantly evolving, and we need a tech ecosystem that can respond to those shifting sands, harness its opportunities, and address emerging challenges. The measures unveiled today will do exactly that.

"We’re investing in our AI talent pipeline with a £54 million package to develop trustworthy and secure artificial intelligence, and putting our best foot forward as a global leader in tech both now, and in the years to come."

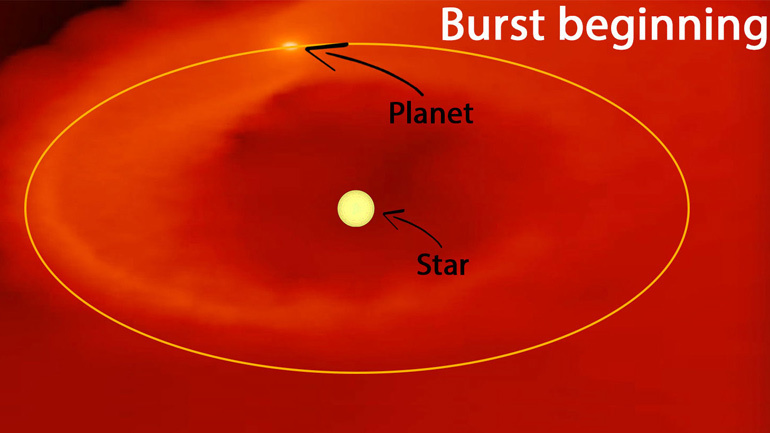

AI developments present enormous opportunities in almost every aspect of modern life, particularly in addressing climate change challenges and pursuing net-zero targets. As part of this investment, a further £13 million will fund 13 projects based at universities across the UK to develop pioneering AI innovations in sustainable land management, efficient CO2 capture, and improved resilience against natural hazards.

The commitments follow the announcement in March of £117 million in funding for Centres for Doctoral Training in AI, with a further £46 million to support Turing AI Fellowships to develop the next generation of top AI talent.

In pursuit of the UK’s science and technology superpower ambitions, Chloe Smith has also announced that the Department for Science, Innovation, and Technology will shortly launch an open call for proposals to pilot new, collaborative approaches to scientific research in the UK, backed by up to £50 million in government funding. The call will open in the coming weeks, and the money will drive investment and partnership with industry and further afield to fund ideas and innovations that aren’t currently addressed in the UK research sector. This will benefit the UK’s research community by allowing organizations to explore the viability of new models for performing research in specific areas, bypassing the large start-up costs normally needed to set up an entirely new institution.

The UK Science and Technology Framework sets out how the UK will respond to emerging and critical technologies. Geospatial technology is one such example, and the new UK Geospatial Strategy, which will launch tomorrow (Thursday 15 June), will drive the use of location data right across the economy, including property, transportation, and beyond, fuelling growth through innovation.

Professor Dame Ottoline Leyser, Chief Executive of UK Research and Innovation (UKRI), said: "UKRI is investing in the people and technologies that will improve lives for people in the UK and around the world. By supporting research to develop AI that is useful, trustworthy, and trusted, we are laying solid foundations on which we can build new industries, products, and services across a wide range of fields.

"Working through cross-disciplinary partnerships we will ensure that responsible innovation is integrated across all aspects of the work as it progresses."

The measures announced today will fuel the government’s mission to make the UK the most innovative economy in the world and build a technology ecosystem that cements the UK’s place at the frontier of global tech development.
